How Sentinel Uses TruthScan to Stop AI-Powered Marketplace Fraud

AT A GLANCE

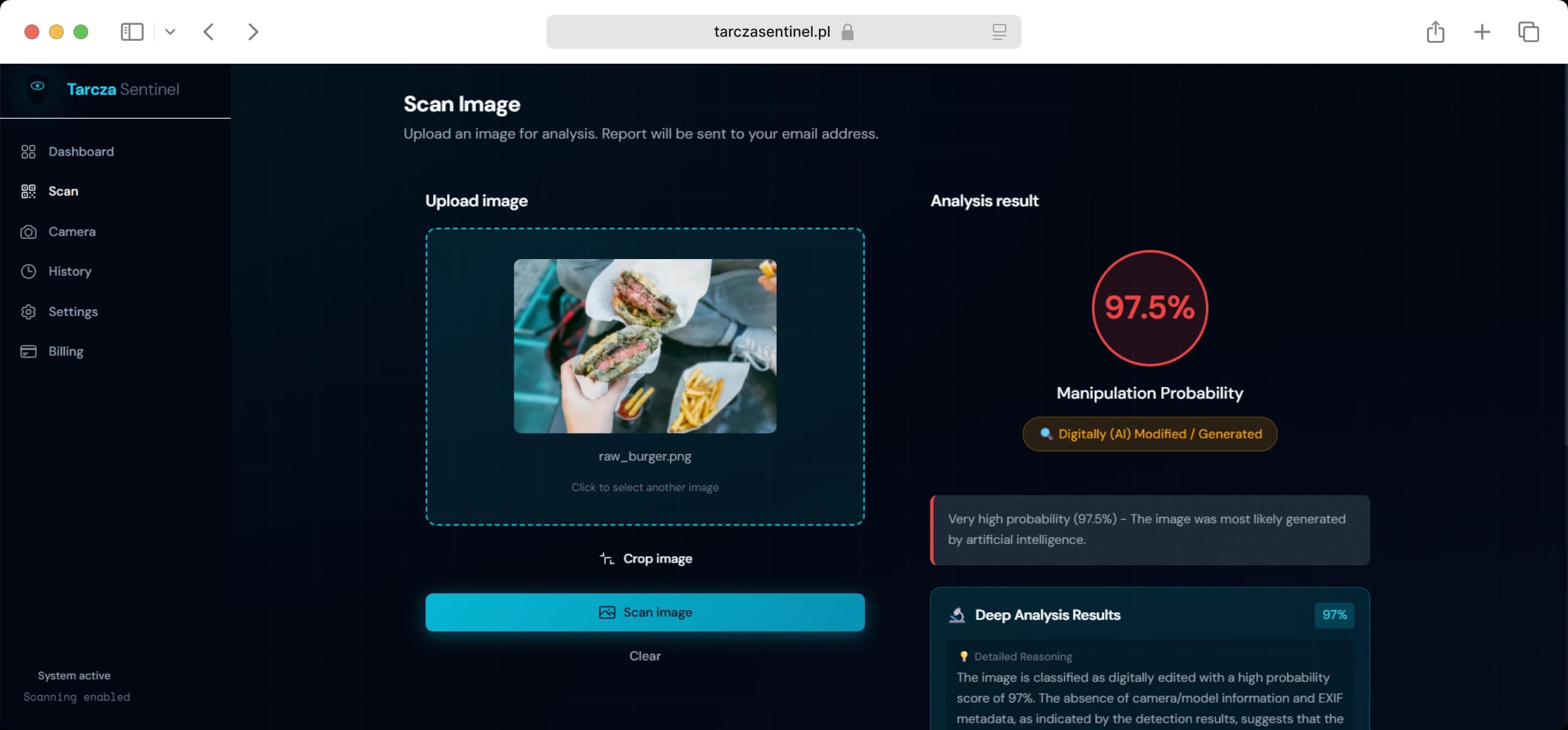

Scammers on Allegro, one of Europe's largest online marketplaces, use AI to edit real product photos (adding fake stains, scratches, or damage) to support false refund claims. Sentinel detects these edits. Founder Piotr Szmielew first tried alternatives from OpenAI and Hive, and neither worked out: OpenAI flagged real images as fake, and Hive never responded to repeated contact.

Sentinel added TruthScan as the last step in its detection pipeline. TruthScan returned a definitive result on nearly every image tested. Szmielew says TruthScan accounts for 90% of his platform's detection capability. Without it, Sentinel can't reliably identify AI-modified photos.

KEY WINS

90% of AI modification detection capability is powered directly by TruthScan.

Near 0% false positive rate achieved in real-world testing (vs. OpenAI's high failure rate).

14 days from feature request to deployment, proving exceptional partner agility.

The Problem: One Wrong Call Kills the Product

Sentinel's pipeline catches fully AI-generated images. That's the easy problem. The hard problem is detecting small AI edits to real photos (e.g., a digitally added stain on a real jacket, or a scratch composited onto a real screen). That's the fraud type driving false refund claims on Allegro, and it's where every other detector Sentinel tested failed.

In fraud detection, every result is binary: real or fake. If Sentinel flags a legitimate buyer as a fraudster, the marketplace loses that buyer's trust. Do it twice, and the marketplace drops Sentinel. According to Szmielew:

"False positives are actually ruining the whole idea... especially if you're running a pipeline of detectors. If you get a false positive, then you don’t really know what to do."

Sentinel needed a detector accurate enough to catch single-pixel-level AI modifications without misidentifying real photos.
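Szmielew's point about pipelines can be made concrete with a back-of-the-envelope calculation. The numbers here are illustrative assumptions, not Sentinel's measurements: suppose a pipeline has k detectors, any one of which can flag an image as fake, each wrongly flags a real photo with probability f, and their errors are independent. A real photo gets through only if every detector leaves it alone, so the chance it is wrongly flagged somewhere is:

$$
\Pr(\text{real photo flagged}) = 1 - (1 - f)^{k}
$$

With f = 0.05 and k = 3, that is 1 - 0.95^3 = 1 - 0.857375 ≈ 0.143: roughly one legitimate buyer in seven would be wrongly accused, even though no single detector errs more than one time in twenty.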

What Sentinel Tested — and Why Each Failed

Szmielew evaluated three major alternatives. None could support a viable commercial product.

- OpenAI Vision flagged legitimate images as fake. In one batch test of three images, it misidentified the first image — a real, unaltered photo. Sentinel stopped the evaluation. A detector that fails on authentic images cannot be sold to a marketplace where buyers expect accurate rulings.

- Sightengine detected fully AI-generated images at a reasonable cost. But it couldn't detect AI modifications to real photos — the specific fraud type Sentinel was built to catch. A tool that misses the core problem is not a cheaper option. It is the wrong tool.

- Hive Moderation never made it to testing. Hive published no pricing. Sentinel's team sent three or four emails and received no response. Sentinel moved on.

After these results, Sentinel began testing TruthScan across a larger set of real-world fraud samples. Szmielew said TruthScan's responsiveness made the difference:

"The company is amazing. I had a feature request, and the CEO responded to me, and, like, two weeks later it was there. So that was a very, very pleasant experience."

How Sentinel Uses TruthScan

Sentinel runs multiple detectors in sequence. Most catch obvious fakes — like fully generated images with known manipulation patterns. When an image passes those filters and the result remains uncertain, TruthScan makes the final call.

Szmielew explained the role clearly:

"There is the reason why TruthScan is there as the final model in the pipeline... If the previous results are inconclusive, then TruthScan is the final judge."

"Other tools are good as a first line... but when it comes to big guns, TruthScan is there at the end to solve everything."

Sentinel built its pipeline this way after testing TruthScan against a larger dataset of real fraud cases with additional stakeholders. TruthScan was the only tool that reliably identified AI modifications to real photos — the layer of fraud that other detectors missed.
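The cascade described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Sentinel's code or the TruthScan API: `Verdict`, `run_cascade`, and the two toy detectors (`generation_check`, `modification_judge`) are hypothetical names, and each detector is modeled as a plain function from image bytes to a verdict. First-pass detectors can only end the pipeline early by flagging an obvious fake; anything they do not flag is escalated to the final judge, whose verdict (which may itself be inconclusive) is returned.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Sequence, Tuple


class Verdict(Enum):
    REAL = "real"
    FAKE = "fake"
    INCONCLUSIVE = "inconclusive"


# Hypothetical detector interface: any function from image bytes to a Verdict.
# Nothing here models the real TruthScan (or any other vendor) API.
Detector = Callable[[bytes], Verdict]


@dataclass
class PipelineResult:
    verdict: Verdict
    decided_by: str  # name of the stage that produced the final verdict


def run_cascade(
    image: bytes,
    first_pass: Sequence[Tuple[str, Detector]],
    final_judge: Tuple[str, Detector],
) -> PipelineResult:
    """Run first-pass detectors in order; escalate to the final judge if none flags a fake."""
    for name, detect in first_pass:
        if detect(image) is Verdict.FAKE:
            # Obvious fake caught early: the final judge is never called.
            return PipelineResult(Verdict.FAKE, name)
    # No first-pass detector was conclusive, so the final judge makes the call.
    judge_name, judge = final_judge
    return PipelineResult(judge(image), judge_name)


# Toy stand-ins for illustration only (hypothetical string-matching "detectors").
def generation_check(image: bytes) -> Verdict:
    # Pretend images tagged b"GEN" are fully AI-generated.
    return Verdict.FAKE if image.startswith(b"GEN") else Verdict.INCONCLUSIVE


def modification_judge(image: bytes) -> Verdict:
    # Pretend a b"stain" marker means an AI edit was composited onto a real photo.
    return Verdict.FAKE if b"stain" in image else Verdict.REAL


if __name__ == "__main__":
    stages = [("generation_check", generation_check)]
    judge = ("modification_judge", modification_judge)
    for img in (b"GEN-sneaker", b"jacket-stain", b"jacket"):
        result = run_cascade(img, stages, judge)
        print(img, result.verdict.value, result.decided_by)
```

Running the script reports the fully generated image as fake, decided by `generation_check`, and defers the other two to `modification_judge` (fake for the edited jacket, real for the clean one). Putting broad filters first and reserving the modification-aware detector for cases they cannot settle is one reasonable reading of the design Szmielew describes.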

Results

One inconclusive result in all testing. Across Sentinel's full test set, TruthScan returned an uncertain result only once. Every other image received a definitive ruling (real or fake).

90% of Sentinel's detection capability depends on TruthScan. Szmielew said that removing TruthScan would cut his platform's ability to detect AI-modification fraud by 90%. The other tools in Sentinel's pipeline handle basic cases. TruthScan handles the rest.

No procurement delays. Sentinel integrated TruthScan without waiting for sales approvals or API access — processes that stalled evaluations with Hive. This allowed Sentinel to keep scaling as demand increased.

In Szmielew's own words:

"It's so easy to integrate... the risk is very low... if you're looking to detect AI image modification, I think that's the good tool for the job right now."

What Comes Next

Sentinel now sells a fraud detection product that returns a definitive result on nearly every image tested. TruthScan provides 90% of that capability, something neither OpenAI nor Hive could deliver.

Sentinel was accepted into the Google Cloud for Startups program. The TruthScan integration was a factor in that acceptance. And now, Sentinel is expanding beyond its initial marketplace clients.

Competitive Performance Analysis

The following data summarizes Sentinel's internal evaluation of the competitive landscape.

| Feature/Metric | OpenAI | Sightengine | Hive | TruthScan |
|---|---|---|---|---|
| False Positive Rate | High (~33% in early tests) | Low (but limited scope) | Unknown (no access) | <1% (near zero) |
| Modification Detection | Unreliable | Ineffective | Unknown | High accuracy |
| Time to Access | Immediate | Immediate | Weeks/never | Immediate |
| Role in Pipeline | Rejected | First-pass filter | Rejected | Final judge |

Build With a Partner, Not Just a Provider.

From 2-week feature turnarounds to transparent pricing, see why Sentinel's founder calls TruthScan the "final judge" for his pipeline.
