In the 90s, the courtroom was revolutionized by DNA. By the 2000s, cell tower pings became the definitive way to place a person at a crime scene.

But in 2026, the new gold standard of evidence is the digital fingerprint. We have entered a high-stakes era where a single video can make or break a multi-million dollar settlement or a criminal conviction, but only if its biological and digital origin can be proven.

A fascinating reality of modern litigation is that the human eye is no longer the ultimate judge of truth. In fact, research shows that humans can only identify deepfakes with a success rate slightly better than a coin flip.

Think of it this way: every video file has a unique signature. If even a single pixel is altered by AI, that fingerprint changes instantly.

If you submit video evidence without a verified forensic layer to lock that fingerprint in place, a modern judge may deem the footage inadmissible. The era of “seeing is believing” is officially over.

We have entered the stage of AI video verification, where proof is found in the hidden digital code beneath the surface. In this guide, we explore why traditional evidence is failing, how to spot manipulated clips, and why verification technology is now a non-negotiable asset for legal teams.

Key Takeaways

- In 2026, legal standards require all video evidence to undergo forensic AI screening to ensure it hasn’t been tampered with or synthetically generated.

- Video fraud includes subtle edits like timestamp manipulation and object removal, making comprehensive file analysis essential for discovery.

- Professional deepfake detectors are the only tools capable of catching the microscopic mathematical patterns that AI leaves behind during the creation process.

- Automated evidence analysis allows firms to process massive volumes of surveillance and smartphone footage with enterprise-grade accuracy.

- TruthScan protects legal integrity by offering real-time detection of synthetic masks and voice clones during live remote hearings.

What Is AI-Generated Video Evidence

In legal proceedings, video evidence is the most persuasive tool available to a jury, yet it is also the most vulnerable to high-tech exploitation.

Unlike traditional physical evidence, digital files can be rewritten at the pixel level to create entirely new “realities.”

Synthetic courtroom footage represents a massive threat to the judicial process. This involves videos created from scratch where a witness or defendant is shown saying things they never actually uttered.


For example, in a recent California order in Mendones v. Cushman & Wakefield, a witness video was discovered to be a complete deepfake, leading the court to strike the evidence and question the trustworthiness of future submissions.

Then there are manipulated surveillance videos. Fraudsters don’t always create a new video; sometimes they just “clean up” the old one. This includes removing a specific person from a frame or inserting an object to change the context of a crime.

Finally, AI-altered legal recordings focus on depositions and remote testimonies. Scammers use real-time deepfake filters to impersonate witnesses or hide their true identity during remote hearings, creating a crisis for legal authenticity standards across international jurisdictions.

Why AI Video Evidence Is Becoming a Legal Risk

The legal system was designed for a world where cameras captured physical light. Today, cameras capture data that can be reordered by an algorithm in seconds, turning every piece of digital proof into a potential liability.

Increased Deepfake Accessibility

Just two years ago, creating a convincing deepfake required a high-end computer and specialized skills. Today, anyone with a smartphone can generate a fake incident report for less than the price of a coffee.

This accessibility means that even small-claims cases are now flooded with synthetic proof that law firms are not traditionally equipped to handle.

Harder Evidence Verification

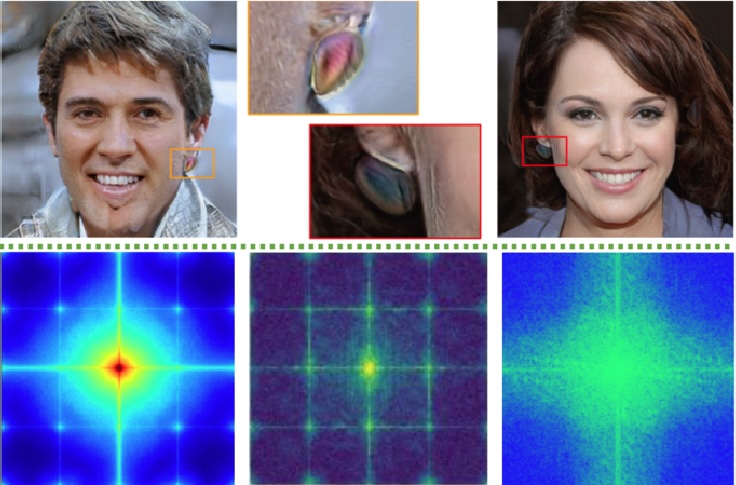

As AI models improve, the “uncanny valley” where fake videos look slightly wrong is disappearing.

We have reached a point where challenging AI-enhanced video evidence is a necessity because even “enhanced” body-cam footage might be inventing pixels that weren’t there in the original recording.

This makes it impossible for a human reviewer to verify authenticity without specialized software.

Growing Litigation Complexity

When one side claims a video is fake, the entire case grinds to a halt. The “deepfake defense” is becoming a standard tactic where lawyers argue that incriminating footage is just a well-made AI fabrication.

This adds layers of expert testimony and forensic costs that can balloon a firm’s litigation budget if they don’t have automated screening in place.

Common Types of AI-Generated Video Evidence

1. Deepfake Witness Statements: These are the most high-profile threats, where a person’s likeness and voice are cloned to deliver false testimony. These videos often look perfect but fail under microscopic sync analysis.

2. Altered Surveillance Footage: Scammers use AI to loop background footage or remove incriminating movements. A thief might “mask” themselves out of a CCTV clip, making it look like a door opened and closed on its own.

3. Fabricated Incident Recordings: Common in insurance fraud, these videos show accidents, like a slip and fall, that never happened. The AI blends the human subject into the environment so seamlessly that the physics of the fall appear natural.

4. AI-Generated Screen Captures: Fraudsters create fake social media videos or news clips to support a narrative. These are often used in defamation or “wrongful firing” cases to provide false context for an employee’s behavior.

Recent Legal Cases Involving AI Video Evidence

The courts are quickly learning that they can no longer take video at face value. Several high-profile disputes have already set the stage for how AI evidence will be handled in the future.

Deepfake Political Footage Disputes

Political litigation has become a testing ground for deepfake laws. In 2025 and 2026, we saw multiple cases where political disinformation and deepfakes were used to manipulate public opinion or harass opponents.

Courts are now being asked to issue emergency injunctions to remove these videos before they can impact election or legal outcomes.

A defining example of this conflict is the ongoing legal battle involving Elon Musk and California’s AB 2839.

In late 2024 and throughout 2025, Musk’s platform, X, challenged the state’s attempt to regulate AI-generated political parodies, leading to a federal judge initially striking down the law on First Amendment grounds.

Source: LA Times

However, by early 2026, the litigation entered a new phase as California authorities sought to hold AI companies accountable for the spread of sexually explicit and deceptive deepfakes generated by models like Grok.

This case proves that without a forensic standard to distinguish between satire and malicious disinformation, the judicial system remains vulnerable to “deepfake defenses” that can stall critical rulings for months.

Manipulated Surveillance Evidence

In criminal trials, the “AI Enhancement” issue is a recurring problem. A landmark ruling in California recently highlighted how AI-generated visuals and deepfakes can sabotage a case.

When a video is “upscaled” by AI to make a license plate clearer, the defense can argue the machine “guessed” the numbers, making the evidence unreliable.

Questions Around Digital Authenticity

The legal industry is currently grappling with the dangers of AI-generated evidence in everyday civil suits.

Whether it’s a divorce case involving a fake recording or a business dispute where a CEO’s video call was faked to authorize a wire transfer, the burden of proof is shifting toward the person who submits the video.

How Legal Teams Can Authenticate Video Evidence

Authentication is the bridge between a raw video file and a winning case. Legal teams must adopt a rigorous “zero-trust” workflow for every piece of digital media that enters their discovery package.

- Source File Validation Always request the original file from the device that recorded it. For example, if a client provides a WhatsApp video, you should go back and get the original raw file from the phone’s storage to check for native camera signatures.

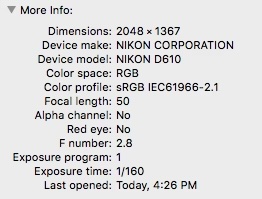

- Metadata Forensic Analysis Check the hidden EXIF data for any traces of editing software. An example of a red flag is a surveillance clip whose metadata shows it was exported from “CapCut” or “Adobe Premiere” just minutes before it was sent to counsel.

- Chain-of-Custody Verification Document every person who handled the file. Using a system like TruthScan allows you to create a “hash” or digital fingerprint of the video the moment you receive it, ensuring it hasn’t been touched throughout the trial.

- Cross-Reference Supporting Evidence Check the video against other facts. For example, if a dashcam video shows a sunny day but local weather reports say it was raining at that time, you have clear proof of a manipulated incident recording.
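The fingerprinting step in the chain-of-custody item can be illustrated with a short sketch. This is not TruthScan’s implementation; it is a minimal example using Python’s standard `hashlib`, and the record field names (`handler`, `action`, and so on) are assumptions made for illustration.

```python
import hashlib
from datetime import datetime, timezone
from pathlib import Path


def sha256_of_file(path, chunk_size=1024 * 1024):
    """Return the SHA-256 hex digest of a file, read in chunks to limit memory use."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def custody_entry(path, handler, action):
    """Build one chain-of-custody record (field names are illustrative)."""
    return {
        "file": Path(path).name,
        "sha256": sha256_of_file(path),
        "handler": handler,
        "action": action,
        "recorded_at_utc": datetime.now(timezone.utc).isoformat(),
    }


def is_unchanged(path, expected_sha256):
    """True if the file's current fingerprint matches the one logged at intake."""
    return sha256_of_file(path) == expected_sha256
```

A fingerprint like this only shows that the file has not changed since it was logged. It says nothing about whether the file was authentic when it arrived, which is why the other checks in this list still matter.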

Types of Video Evidence Courts Use Every Day

As digital recording becomes a standard part of modern life, the variety of footage submitted as evidence continues to expand.

Because each source has different security standards, legal teams must treat each one with a specific level of forensic scrutiny to ensure authenticity.

Body-Worn Camera (BWC) Footage

Commonly used in criminal cases and civil rights litigation, body cameras provide a first-person perspective of interactions between law enforcement and the public.

While these systems often have built-in “chains of custody,” they are increasingly subject to questions about AI-driven “enhancements.” If a piece of footage is upscaled to clarify a suspect’s face or a weapon, the defense may argue that the AI is inventing pixels that were never captured by the lens.

Private Doorbell and Smart Home Video

Consumer-grade security cameras, such as Ring or Nest, have become a primary source of evidence in neighborhood disputes and domestic cases. Unlike professional systems, these files are often stored in the cloud and shared via links, which makes them highly susceptible to “cheapfake” edits.

A fraudster can easily use consumer-level software to change a timestamp or remove a specific person from the porch before downloading the clip to send to their attorney.

Dashcam and Fleet Telematics

Dashcam footage is the “silent witness” in car accidents and personal injury claims. Modern fleet cameras often overlay GPS data, speed, and G-force directly onto the video.

Verification is critical here because AI can be used to alter the “telemetry” text on the screen—making it look like a driver was going 35 mph when they were actually going 50 mph. Spotting these pixel-level overlays requires a deep scan for text-layer inconsistencies.
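One way to sanity-check on-screen telemetry is to compare it with the speed implied by the GPS track itself. The sketch below assumes the GPS fixes and the overlaid speed readings have already been extracted by other tools; the data layout, the function names, and the 5 mph tolerance are all hypothetical choices for illustration.

```python
import math

EARTH_RADIUS_M = 6_371_000
MPS_TO_MPH = 2.23694


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two latitude/longitude points."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def gps_speed_mph(fix_a, fix_b):
    """Average speed between two (timestamp_seconds, lat, lon) fixes."""
    (t1, lat1, lon1), (t2, lat2, lon2) = fix_a, fix_b
    if t2 <= t1:
        raise ValueError("fixes must be in increasing time order")
    return haversine_m(lat1, lon1, lat2, lon2) / (t2 - t1) * MPS_TO_MPH


def overlay_mismatches(fixes, overlay_mph, tolerance_mph=5.0):
    """Return (index, gps_mph, overlay_mph) for intervals where the two disagree.

    overlay_mph[i] is the on-screen speed for the interval fixes[i] -> fixes[i + 1].
    """
    flagged = []
    for i in range(len(fixes) - 1):
        derived = gps_speed_mph(fixes[i], fixes[i + 1])
        if abs(derived - overlay_mph[i]) > tolerance_mph:
            flagged.append((i, round(derived, 1), overlay_mph[i]))
    return flagged
```

A mismatch is a reason to look closer, not proof of tampering: GPS fixes are noisy, so in practice the comparison would be averaged over longer intervals.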

Social Media and Livestream Captures

In 2026, TikTok, Instagram, and “X” are often the first places evidence appears. This footage is notoriously difficult to authenticate because social media platforms compress and strip metadata from every upload.

Legal teams must use AI forensic tools to verify that a “viral” video hasn’t been edited with deepfake filters or re-contextualized from an older event in a different location.

Remote Deposition and Hearing Recordings

Since the shift toward virtual courtrooms, recorded testimonies have become a standard part of the legal record. However, this has created a window for “live” deepfake fraud.

Fraudsters have been caught using real-time voice clones and facial masks during remote depositions to impersonate witnesses. Detecting these anomalies requires real-time analysis of audio-visual synchronization and facial landmark tracking.
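As a rough illustration of an audio-visual synchronization check, the sketch below estimates the offset between two per-frame signals using cross-correlation. It assumes that an audio loudness value and a mouth-opening measurement (for example, from facial landmarks) have already been extracted for each video frame by other tools; those inputs and the function names are hypothetical, and production detectors are considerably more sophisticated.

```python
def best_lag(audio_energy, mouth_opening, max_lag):
    """Return the frame shift of mouth_opening relative to audio_energy
    that gives the highest cross-correlation.

    A positive result means the mouth movement trails the audio.
    """
    def centered(values):
        mean = sum(values) / len(values)
        return [v - mean for v in values]

    a = centered(audio_energy)
    m = centered(mouth_opening)
    n = min(len(a), len(m))
    best, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for i in range(n):
            j = i + lag
            if 0 <= j < n:
                score += a[i] * m[j]
        if score > best_score:
            best, best_score = lag, score
    return best


def lag_to_ms(lag_frames, fps):
    """Convert a lag measured in frames to milliseconds."""
    return lag_frames * 1000 / fps
```

Because the signals are sampled once per video frame, the smallest offset this approach can resolve is one frame, roughly 33 ms at 30 frames per second.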

Questions to Ask Before Accepting Video Evidence

Before any video enters the record, it must pass a “vibe check” followed by a technical audit. Asking the right questions early can save a firm from the embarrassment of submitting a fake to the court.

| Question | Why It Matters | Sample Answer / Outcome |
| --- | --- | --- |
| Who created the footage? | Establishes the source and intent. | “A bystander recorded it on an iPhone 15.” (Verify with EXIF data). |
| Was metadata altered? | Shows if the file was re-saved or edited. | “Yes, the creation date is newer than the event.” (High risk flag). |
| Are visual artifacts present? | Indicates AI-blending or GAN noise. | “Strange blurring around the edges of the mouth.” (Reject evidence). |
| Does context align? | Checks the physics and environment. | “Lighting on the face doesn’t match the background.” (AI-generated). |
| Is the audio synced? | Cloned voices often have micro-delays. | “A consistent delay was found between spoken sounds and lip movement.” (Possible deepfake). |

AI Tools Used to Detect Manipulated Videos

To stay ahead of synthetic fraud, legal teams are turning to specialized platforms that can see what humans miss. These tools act as a forensic lab for digital evidence.

Deepfake Detector: Identify Synthetic Footage

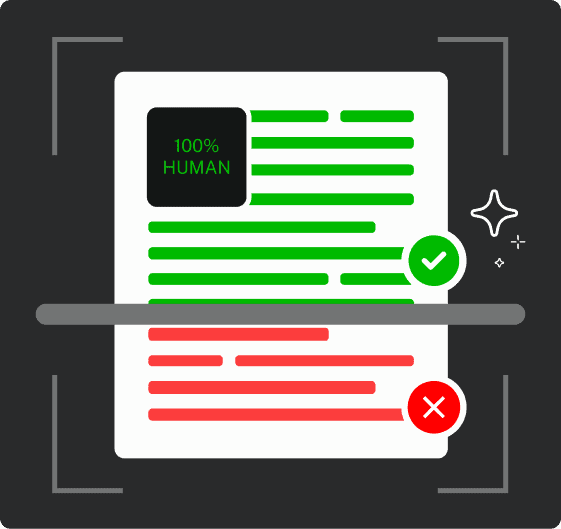

TruthScan’s Deepfake Detector is designed to find the microscopic mathematical patterns left behind by Generative Adversarial Networks (GANs).

While a deepfake might look perfect to a jury, this tool scans every frame for “noise” and pixel-level inconsistencies. It helps legal teams by providing a confidence score that can be used to justify whether a video should be admitted or challenged.

The primary benefit is peace of mind; you know exactly what you are putting in front of a judge.

Detectors of this kind are specialized programs that scan for GAN artifacts: the microscopic mathematical patterns, or noise, left behind by the AI models that generate synthetic faces. Where a human sees a face, the detector sees a digital signature that doesn’t belong.

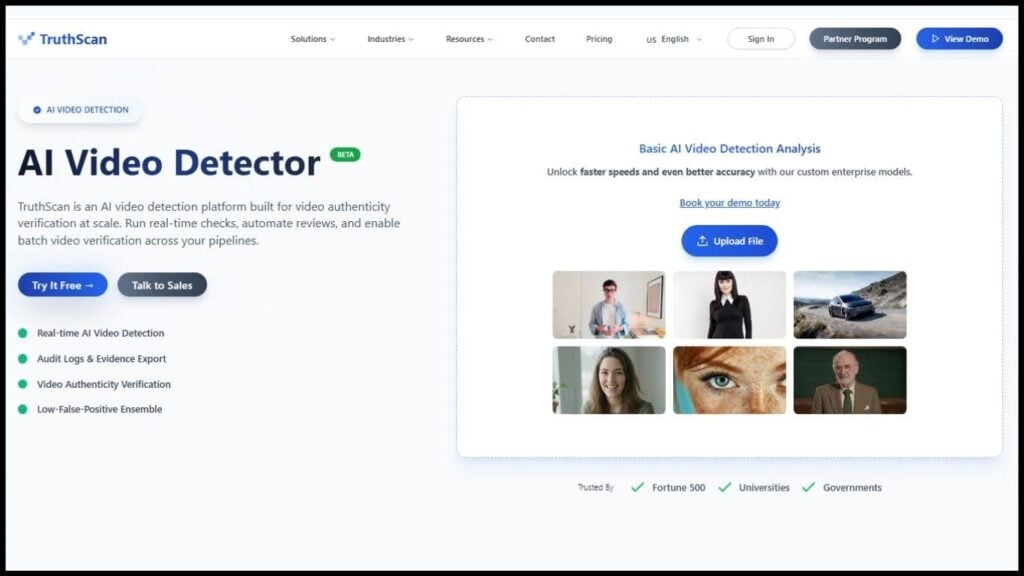

AI Video Detector: Analyze Video Authenticity

This tool goes beyond faces and looks at the entire frame to find signs of AI upscaling or manipulation. It analyzes lighting vectors and shadow consistency to see if an object, such as a weapon or a specific person, was digitally inserted into the scene.

For legal teams, this is essential for verifying surveillance footage where the fraud is often subtle. It helps ensure that the “enhanced” video your expert is using doesn’t contain invented pixels that could lead to a mistrial.

Frame-by-Frame Anomaly Detection

AI video generation often struggles with “temporal coherence,” meaning things might change slightly from one frame to the next.

TruthScan’s frame-by-frame analysis checks for these glitches, such as a flickering ear or a background that shifts unnaturally. This level of detail is impossible for a human to perform manually on a 10-minute clip but takes the AI only seconds.

This tool protects firms from “high-fidelity” fakes that are only visible when the footage is slowed down and mathematically analyzed.
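The idea of checking temporal coherence can be illustrated with a very simple heuristic: measure how much each frame differs from the previous one and flag transitions that are far outside the clip’s normal range. This is a toy sketch, not TruthScan’s method; it assumes frames have already been decoded into flat lists of grayscale pixel values, and the threshold parameters `k` and `floor` are arbitrary illustrative defaults.

```python
from statistics import median


def frame_change_scores(frames):
    """Mean absolute pixel difference between each pair of consecutive frames."""
    scores = []
    for prev, curr in zip(frames, frames[1:]):
        if len(prev) != len(curr):
            raise ValueError("all frames must have the same number of pixels")
        scores.append(sum(abs(a - b) for a, b in zip(prev, curr)) / len(curr))
    return scores


def flag_abrupt_transitions(frames, k=6.0, floor=1.0):
    """Return indices i where the change from frame i to i + 1 is an outlier.

    Uses a robust threshold of median + k * MAD, with a minimum spread of
    `floor` so that near-static footage does not flag tiny fluctuations.
    """
    scores = frame_change_scores(frames)
    if not scores:
        return []
    med = median(scores)
    mad = median(abs(s - med) for s in scores)
    threshold = med + k * max(mad, floor)
    return [i for i, s in enumerate(scores) if s > threshold]
```

A heuristic like this will also flag legitimate scene cuts and sudden lighting changes, so flagged frames are candidates for closer review rather than findings in themselves.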

Blockchain Timestamps

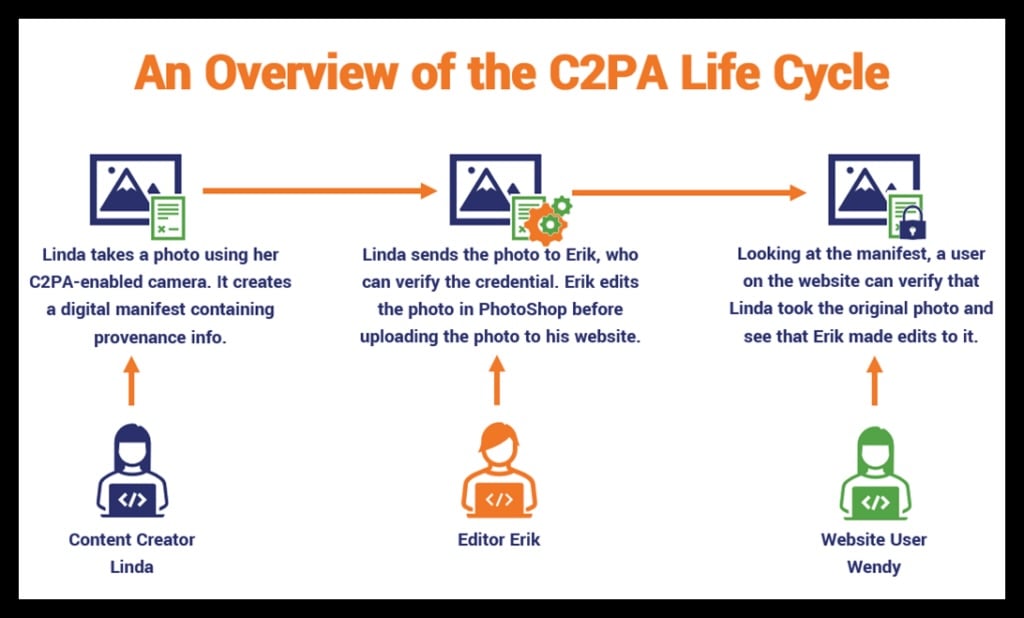

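To show that a video hasn’t been altered since the moment it was recorded, many agencies now use the C2PA standard.

When a video is recorded on a compliant device, a unique digital fingerprint (hash) of the content is created and bound to the file in a cryptographically signed manifest. C2PA itself does not require a blockchain; some provenance systems additionally anchor that fingerprint on a blockchain to obtain an independent timestamp.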

Metadata Forensic Tools

Most media files carry a kind of birth certificate in their metadata: Exif data for photos, and container-level metadata for video. These tools check whether a file was re-saved in AI editing software or whether the location data (GPS) has been spoofed.

If a video claims to be from a store camera but the metadata shows it was exported from a video editor, you have a problem.
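A basic version of this kind of check can be sketched as follows. It assumes the metadata has already been exported into a flat dictionary by a separate tool; the tag names (`encoder`, `software`, `handler_name`, `creation_time`), the list of editor names, and the one-hour allowance are hypothetical and would need to match whatever your extraction tool actually produces.

```python
from datetime import datetime, timedelta

# Illustrative, non-exhaustive list of editor names to look for.
EDITOR_MARKERS = ("capcut", "premiere", "final cut", "davinci resolve")


def metadata_red_flags(metadata, event_time, max_delay=timedelta(hours=1)):
    """Return human-readable warnings for a metadata dictionary.

    `metadata` maps tag names to values. `creation_time` is expected as an
    ISO 8601 string without a timezone suffix, and `event_time` as a naive
    datetime in the same timezone.
    """
    flags = []
    for tag in ("encoder", "software", "handler_name"):
        value = str(metadata.get(tag, "")).lower()
        for marker in EDITOR_MARKERS:
            if marker in value:
                flags.append(f"{tag} mentions editing software: {metadata[tag]}")
    created = metadata.get("creation_time")
    if created is None:
        flags.append("creation_time is missing")
    else:
        created_at = datetime.fromisoformat(created)
        if created_at - event_time > max_delay:
            flags.append("creation_time is later than the reported event")
    return flags
```

Metadata is easy to strip or rewrite, so a clean result here does not establish authenticity. The check is most useful for catching careless edits early in discovery.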

The Future of AI Video Evidence in Legal Disputes

The legal landscape is heading toward a mandatory verification era. As we move further into 2026, we are seeing rising evidentiary challenges as AI becomes the default tool for both creation and detection.

Courts are no longer satisfied with a witness saying “that looks like me”; they are demanding stronger authentication standards that include cryptographic timestamps and forensic audit logs.

This shift is leading to the rise of AI-driven forensic investigations as a standard part of the e-discovery process. Firms that ignore these trends risk not only losing cases but also facing sanctions for failing to vet their own evidence.

According to current research on the dangers of AI-generated evidence and its ethical implications, the legal community must adopt these technologies now to preserve the integrity of the justice system.

How TruthScan Strengthens Video Evidence Verification

TruthScan provides an enterprise-grade video analysis platform built specifically for high-stakes environments. It offers real-time authenticity detection, which is vital for remote depositions where you need to know now if the person on the screen is using a filter.

By providing scalable forensic workflows, TruthScan allows law firms to process thousands of discovery files simultaneously, ensuring that no piece of “cheapfake” or “deepfake” media slips through the cracks.

It generates court-ready PDF reports that document every step of the verification process, giving your firm a defensible audit trail for every piece of evidence.

Talk to TruthScan About Detecting AI Video Evidence

Protect your firm’s reputation and your client’s interests by closing the door on digital fraud. TruthScan enables you to protect legal investigations by identifying fakes before they ever reach a courtroom.

Our tools allow you to verify digital evidence with absolute confidence, helping you to reduce litigation risk and avoid the embarrassment of a struck witness or a dismissed case.

Try TruthScan to get 20,000 free credits and secure your next case with forensic-grade AI video verification.