Just a few years ago, you needed a professional studio and a lot of technical skill to mimic someone’s voice.

Now, anyone with an internet connection and some spare time can create a digital clone of anyone’s voice in less than a minute.

The tools for these attacks are becoming cheaper and more accessible every day. And because so many people share their lives on social media, it is very easy for a scammer to find the audio they need.

In this article, we’ll walk you through some of the notorious real-world examples of audio fraud. We’ll also introduce you to a solution to put a timely end to these threats.

Key Takeaways

- Voice deepfake fraud attempts have surged by over 2,137% in recent years, fueled by the fact that modern AI can generate an 85% accurate voice clone using as little as three seconds of source audio.

- Notorious real-world attacks include the 2024 Arup engineering firm heist where scammers used deepfake video and audio of a CFO to trick an employee into transferring $25.6 million.

- Beyond corporate theft, voice deepfakes pose severe emotional risks to families through kidnapping scams and can bypass traditional biometric security systems used by banks and other high-security apps.

- Organizations are forced to move past human verification alone by implementing multi-person approval protocols for large transfers and deploying AI voice detectors like TruthScan to analyze acoustic fingerprints in real-time.

- TruthScan secures enterprise interactions by identifying synthetic speech artifacts from platforms like ElevenLabs and Murf with over 99% accuracy across all major audio and video formats.

What Are Voice Deepfake Attacks?

A voice deepfake is a digital forgery of someone’s voice. The attacker uses software that can analyze every little detail about how someone speaks from a recording of their voice.

To clone the voice, the software looks for patterns in the recording. It mainly considers things like pitch, tone, and the way the speaker breathes between sentences.

Once it learns these patterns, the attacker can make it say anything in that voice.
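To make "noticing patterns" a little more concrete, here is a minimal sketch of how software can measure one such pattern, pitch, from raw audio samples. It uses plain autocorrelation, a textbook technique, and is not how any particular cloning tool works. The function name `estimate_pitch_hz` and the 80 to 400 Hz search range are assumptions chosen for illustration, and the "voice" is just a synthetic 200 Hz tone.

```python
import math


def estimate_pitch_hz(samples, sample_rate, fmin=80.0, fmax=400.0):
    """Estimate the pitch of a short audio frame using autocorrelation.

    The frame is compared with delayed copies of itself. The delay (lag)
    at which the two line up best corresponds to one pitch period.
    """
    min_lag = int(sample_rate / fmax)  # shortest period we search for
    max_lag = int(sample_rate / fmin)  # longest period we search for
    best_lag, best_score = min_lag, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        score = sum(
            samples[i] * samples[i + lag] for i in range(len(samples) - lag)
        )
        if score > best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag


# Illustrative input: 50 ms of a pure 200 Hz tone sampled at 16 kHz.
SAMPLE_RATE = 16_000
tone = [math.sin(2 * math.pi * 200 * n / SAMPLE_RATE) for n in range(800)]
pitch = estimate_pitch_hz(tone, SAMPLE_RATE)
print(f"Estimated pitch: {pitch:.1f} Hz")
```

Real cloning software learns far richer features than a single pitch value, but the principle is the same: speech is turned into numbers that a model can learn and reproduce.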

What’s dangerous is that tools for creating voice deepfakes are easy to get. Most of them don’t need any installation because they run as websites.

You just need to throw a few dollars at them and start cloning voices. If you dig a little more, you might even find tools that let you clone voices for free.

This new and easily available tech has led to a steep rise in audio fraud.

Scammers only need a tiny bit of audio of someone’s voice to clone it, and they can source that audio from social media or some other public channel.

Then they can use the cloned voice to impersonate a person in situations like live phone calls, video meetings, voice notes, announcements, etc.

These aren’t just hypothetical use cases of voice deepfake attacks. These things have already happened. We’ll cover some of the notorious voice deepfake attacks later on.

Potential Risks of Voice Deepfake Attacks

When you hear about voice deepfakes, you might picture a funny video of a celebrity saying things they never said.

But even that seemingly innocuous use of a cloned voice can cause serious damage to the people involved. Let’s talk about the risks.

Financial Devastation for Businesses

A voice deepfake can ruin a company’s reputation forever. It also has the power to rob it of large sums of money in minutes.

How? Scammers can clone the voice of a company’s boss and use it to call employees and pressure them into doing things they shouldn’t, like moving money to an unfamiliar account.

This is just one example. The possibilities are endless.

Bypassing Security Systems

Your voice is a biometric that nobody else should have. That’s because some apps, especially banking ones, let you use it as a password to log in.

While these apps may have a built-in automated voice verification system to prevent unauthorized access, a good voice clone still has a chance to fool them.

The Emotional Toll on Families

Perhaps the easiest targets for audio fraudsters are elderly people in the family. Parents and grandparents, for example.

Scammers might call, let’s say, a mother and play the sound of her daughter crying for help, saying she has been kidnapped.

Eroding Trust in the Workplace

Voice deepfake attacks also hurt the trust in a healthy workplace.

Employees will have to double-check phone calls from their manager to make sure it’s not a scammer commanding them to do risky things in a cloned voice.

So what are your options if you can’t double-check every call manually? All the risks we just discussed point to the need for automated deepfake attack prevention.

Luckily, we have deepfake AI voice detector tools now, TruthScan being one of them.

Prevent fraud and impersonation with TruthScan’s AI Voice Detector.

Real-World Examples of Voice Deepfake Attacks

Thanks to AI, we have now reached a point where we cannot readily trust what our eyes see or ears hear. AI is now able to create realistic pictures and videos. The same goes for audio.

You might have already heard stories of people falling for deepfake audio fraud. It keeps popping up on the news and social media from time to time, too. Some of these are hard to wrap your head around.

Let’s tell you about some of the most notorious voice deepfake attacks so you can get a good idea of how cleverly these are executed.

The $25 Million Virtual Meeting That Never Actually Happened

This deepfake attack is probably the most reported one.

The victim was a UK engineering firm called Arup, and the scam targeted its Hong Kong office.

It was early 2024 when a worker in the company’s finance department got an email that appeared to come from the company’s CFO in the UK. The email directed the worker to make secret money transfers to some bank accounts.

At first, the employee was actually quite suspicious because the request felt a bit odd, and they thought it might be a phishing scam.

But then the employee was invited to a video conference call. When the employee joined the call, they saw what seemed exactly like the CFO and several other senior colleagues.

Everyone looked and sounded exactly like they should. They were also talking to each other to make it seem more real.

This completely wiped away all the doubts of the finance department worker, who then sent over $25.6 million (~HK$200 million) to several different Hong Kong bank accounts via 15 transactions.

It was later found that this entire setup was a deepfake. No person in the call was who they looked like, except the Arup employee. The scammers had perfectly coordinated the attack using high-quality audio and video clones of the company’s CFO and senior employees.

The Italian Minister and the Fashion Icons

This one took place in early 2025. Scammers impersonating the Italian defence minister Guido Crosetto called several members of Italy’s business elite and asked for immediate financial help to rescue journalists who had been kidnapped.

In the calls, the fake Guido Crosetto claimed this was a top-secret government operation. The targets reportedly included legendary fashion figures like Giorgio Armani.

Sadly, one person ended up transferring about €1 million (roughly one million dollars). The scammers succeeded by exploiting a sense of patriotic duty to help out.

The $243,000 UK Energy Heist

This happened in 2019 and was probably the first ever reported deepfake of its scale.

A CEO of a UK energy firm thought he was on the phone with his boss from the parent company in Germany.

The CEO was ordered to wire about €220,000 ($243,000) to a supplier in Hungary. He was told that it was a critical deal that needed immediate transfer. The CEO fell for the scam and transferred the money.

A Terrifying Call for a Mother in Arizona

Remember how we described audio deepfakes as a threat to families? The scam in that example actually happened.

Scammers had cloned a 15-year-old girl’s voice from a video of hers on social media. They then called her mother and claimed in the daughter’s voice that she had been kidnapped and her kidnappers were demanding ransom money immediately.

Luckily, the father was able to call their actual daughter, who was safe at ski practice during the whole ordeal. The family dodged the scam.

How Voice Deepfakes Affect Enterprises

The main aim of a voice deepfake attack is usually to steal money.

But when the target is an enterprise, the damage is multidimensional.

For instance, deepfakes can poison the atmosphere between the employees of a company. They can create a culture where employees are always terrified of making a blunder.

Take the example of call centers. We are seeing reports of cybercriminals calling them and pretending to be specific customers.

Their trick is to make the call center agents change account details of those customers (e.g. home addresses, phone numbers) and take over their accounts.

Remote hiring and virtual interviews are another area where deepfaked voices can be used by candidates to land jobs they aren’t qualified for.

Protecting Businesses From Voice Deepfake Attacks

The traditional security rules at businesses were written in the pre-AI era.

It’s high time businesses reconsidered them. They should start by educating themselves about the many new cyberthreats that have stemmed from the abuse of AI.

For instance, you can organize training sessions for your entire team and show them real examples of audio fraud. You can start with the examples we just discussed above. Those training sessions should also give your team deepfake attack prevention tips for different scenarios.

Then you can go ahead and update internal business policies. One important change can be to stop allowing a single person to authorize massive wire transfers all by themselves.

Big payments should require the approval of an entire panel so that at least one of them can notice in time that something is wrong.
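As a rough illustration of what such a policy could look like inside a payments workflow, here is a minimal sketch. The threshold, the number of approvers, and all names (`TransferRequest`, `APPROVAL_THRESHOLD_USD`, `REQUIRED_APPROVERS`) are hypothetical values chosen for this example, not a recommendation for any specific company.

```python
from dataclasses import dataclass, field

APPROVAL_THRESHOLD_USD = 50_000  # hypothetical cutoff for a "big" payment
REQUIRED_APPROVERS = 3           # hypothetical panel size for big payments


@dataclass
class TransferRequest:
    amount_usd: float
    requested_by: str
    approvals: set = field(default_factory=set)

    def approve(self, approver: str) -> None:
        # The person asking for the transfer can never approve it themselves.
        if approver == self.requested_by:
            raise ValueError("requester cannot approve their own transfer")
        self.approvals.add(approver)

    def can_execute(self) -> bool:
        # Small payments need one independent approver, big ones need a panel.
        needed = (
            REQUIRED_APPROVERS
            if self.amount_usd >= APPROVAL_THRESHOLD_USD
            else 1
        )
        return len(self.approvals) >= needed


request = TransferRequest(amount_usd=25_600_000, requested_by="finance_worker")
request.approve("controller")
request.approve("treasurer")
blocked_with_two = not request.can_execute()
request.approve("regional_director")
allowed_with_three = request.can_execute()
print(blocked_with_two, allowed_with_three)
```

Storing approvals as a set means the same person approving twice still counts once, so a single convinced employee cannot satisfy the panel requirement alone.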

You should also think of integrating an automated AI Voice Detector to run in the background during calls.

That’s what TruthScan AI tools were made for. You can detect, verify, and prevent voice deepfake attacks using our AI tools. Let’s tell you how.

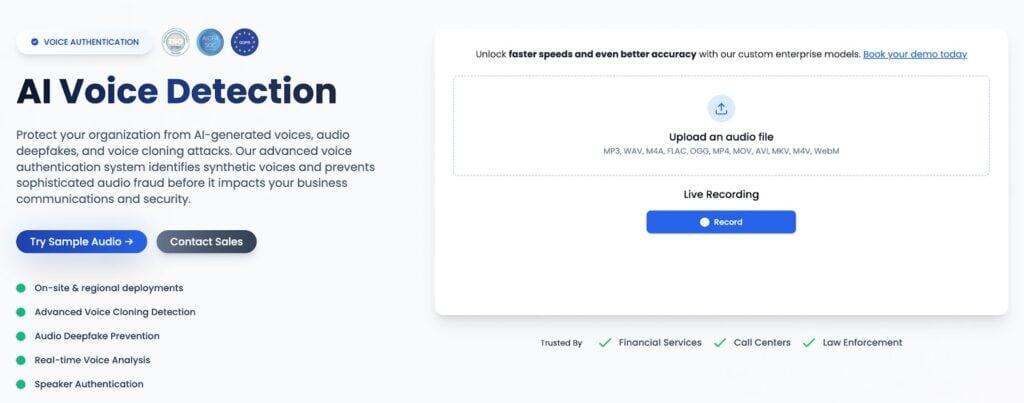

How TruthScan Secures Voice Interactions

We understand that employees cannot reliably tell a deepfaked voice from a real one in the middle of a stressful call. They need a dedicated assistant that handles that job during the call, so your team can focus on what they were hired for.

Our AI Voice Detector is a dedicated solution.

Here’s how it works and what it is capable of:

- Analyzes millions of different data points in live phone calls and video meetings and delivers 99% accuracy

- Issues a warning instantly after detecting a fake voice

- Catches spectral patterns and acoustic fingerprints in voice that are only left behind by synthetic models

- Integrates with your existing call center or help desk through a simple REST API

- Can scan all major audio and video formats
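To show how an instant warning during a live call might be wired into a help desk tool, here is a minimal sketch. It does not use the TruthScan API: `score_chunk` is a stand-in for whatever detector call your integration makes, assumed here to return a probability between 0 and 1 that a chunk of audio is synthetic. The class name, threshold, and window size are likewise assumptions.

```python
from collections import deque


class LiveCallMonitor:
    """Raises a warning when recent audio chunks look synthetic on average."""

    def __init__(self, score_chunk, threshold=0.8, window=3):
        self.score_chunk = score_chunk      # hypothetical detector callable
        self.threshold = threshold          # average score that triggers a warning
        self.recent = deque(maxlen=window)  # only the latest scores are kept

    def process(self, audio_chunk) -> bool:
        """Score one chunk and return True if the agent should be warned."""
        self.recent.append(self.score_chunk(audio_chunk))
        average = sum(self.recent) / len(self.recent)
        return average >= self.threshold


# Stand-in detector for the demo: each "chunk" is already its own score.
monitor = LiveCallMonitor(score_chunk=lambda chunk: chunk)
warnings = [monitor.process(chunk) for chunk in [0.1, 0.2, 0.95, 0.99, 0.99]]
print(warnings)
```

Averaging over a short window, rather than reacting to a single chunk, is one way to avoid alarming an agent over one noisy reading while still responding within a few seconds of sustained suspicious audio.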

Talk to TruthScan About Preventing Voice Deepfake Attacks

You can stop voice cloning fraud in real time with TruthScan, but the question is, when are you going to start? Sooner or later, an employee might make a mistake that gets your company scammed. Don’t wait for that unfortunate moment.

Our AI voice detector can flag voice deepfakes created with platforms like ElevenLabs and Murf.

It works in the background during live phone calls and video meetings, so your call agents don’t have to multitask.

All this needs is a quick integration through our REST API.

Get started with TruthScan now and protect your business from deepfake scams.