Your support agents are trained to be the most helpful people in your company.

They are the frontline of your brand’s reputation, working hard to make sure every interaction ends with a smile.

But do you know the most dangerous irony of 2026?

This very helpfulness makes them your biggest security liability.

Your agents are focused on customer satisfaction.

They don’t always notice a slight robotic tone in a caller’s voice or a tiny blur around a customer’s jawline during a video call.

This gives customer fraud the perfect window to strike.

If your support desk isn’t backed by automated fraud detection, you might already be exposed.

In this blog, we are going to cover what deepfake impersonation is, the techniques attackers use to bypass your defenses, the early indicators that can tip off your team, and how to leverage a real-time AI detector to protect your business.

Let’s dive in.

Key Takeaways

- Support agents are trained to be helpful, which makes them prime targets for AI impersonation.

- Modern fraudsters use real-time voice conversion that transforms a caller’s voice in under 50 milliseconds, so catching it takes a real-time AI detector.

- Automated fraud detection must now cover manipulated screen recordings, fake receipts, and AI-generated IDs.

- Effective deepfake prevention requires scanning audio, video, and images simultaneously.

- Integrating a deepfake detector into e-discovery and support workflows reduces financial loss from BEC (Business Email Compromise) by up to 80%.

What are Deepfake Impersonations?

The word “deepfake” comes from deep learning (a type of AI) and fake.

Impersonation means pretending to be someone else, usually to gain trust, steal money, or access private information.

Deepfake impersonations happen when AI copies a real person’s face, voice, or behavior to create fake content designed to deceive others.

Deepfakes are no longer limited to simple face swaps. In 2026, we’re seeing behavioral deepfakes that can copy:

- Speech patterns

- Tone and pauses

- Facial micro-expressions

- Even personality nuances

This makes it much harder to detect manipulation with the naked eye.

Real-World Example: WPP CEO Impersonation (May 2024)

Cybercriminals used a publicly available photo of WPP CEO Mark Read to create a fake WhatsApp account.

They lured an executive into a Microsoft Teams meeting and used a voice clone of Read to try to solicit money and personal details.

Fortunately, an alert employee became suspicious and reported the incident, preventing financial loss.

Why Customer Support Is Vulnerable

The risk of customer fraud started rising in 2023–24 when generative AI tools became widely available.

But by 2025–26, the threat reached industrial scale.

What once required technical skill can now be done with simple tools and a few minutes of audio.

Customer support teams didn’t suddenly become careless. They became exposed.

Support agents are trained to help people, not question them, which is why deepfake prevention is so difficult to manage manually. Attackers rely on the agent’s instinct to trust and move fast.

Most contact centers still verify identity using simple questions: account numbers, dates of birth, last four digits of an ID. The problem is, that information isn’t private anymore.

Data breaches and social media make it easy to bypass traditional checks, which makes AI impersonation detection a mandatory layer of defense. At a Federal Reserve event in 2025, OpenAI CEO Sam Altman called it crazy that some financial institutions still accept a voiceprint as authentication.

There’s also the pressure of volume. Many agents handle 80 to 120 interactions a day. When calls are back-to-back, there’s no time to analyze subtle voice changes.

This is where automated fraud detection becomes a lifesaver. Without a real-time AI detector working in the background, agents are forced to respond to urgency and emotion.

Techniques Used by Deepfake Impersonators

- Voice cloning in calls

By 2026, tools like ElevenLabs, Speechify, and Murf can create convincing clones from under 10 seconds of audio.

Even more concerning, real-time voice conversion can transform a caller’s voice into someone else’s in under 50 milliseconds.

To the victim, it sounds live, natural, and familiar.

There’s rarely an obvious robotic clue anymore. Without support fraud protection software, agents won’t catch the subtle artifacts like metallic undertones or unnatural breathing patterns that indicate customer fraud.

Examples:

- Wiz (Late 2024): Attackers cloned CEO Assaf Rappaport’s voice to solicit credentials. While this attempt failed due to a tone mismatch, it highlighted the urgent need for AI impersonation detection in corporate communications.

- LastPass (Early 2024): An employee was targeted by a deepfake CEO voice trained on YouTube videos. This incident proved that high-profile individuals are at constant risk, requiring automated fraud detection to safeguard internal support channels.

- Face-swapped agent videos

Face-swap deepfakes use advanced AI models to replace one person’s face with another in a live video.

By 2026, tools like DeepFaceLive and Deep-Live-Cam can run in real time with under 50 milliseconds of delay.

Fraudsters can stream this altered video through platforms like Zoom, Microsoft Teams, or Google Meet using virtual camera tools such as OBS Studio. To the person on the call, everything looks normal.

Example:

KnowBe4 Fake Employee Case (July 2024): A North Korean operative used a stolen U.S. identity and an AI-altered photo to get through the hiring process, including video interviews. He was caught only after internal security flagged suspicious activity on his company-issued device.

- Manipulated screen recordings

Attackers are increasingly using AI to fabricate or alter screen recordings, making it look like a refund, transaction, or support ticket action happened.

These fake recordings can manipulate support agents, override disputes, or escalate chargebacks.

AI can now tweak timestamps, account balances, and dynamic UI elements so convincingly that casual inspection won’t catch it.

Fraudsters can even inject pre-recorded or manipulated videos into live support calls via virtual camera plugins.

Early Indicators of Deepfake Threats

Modern deepfakes are convincing. Effective deepfake prevention involves looking for these “tells”:

Audio-Level Tells:

- Cloned voices sound flat and lack natural pitch changes.

- AI speech often misses normal breathing and filler sounds.

- The voice may not match background noise.

- Real-time voice conversion can cause small delays (200–400ms) on sudden questions.

- Voices from public-speaking recordings may sound too formal for casual conversation.

Video-Level Tells:

- Hair, ears, and jaw edges may look slightly blurred.

- Blinking happens at very regular intervals.

- Eye gaze may not follow the camera naturally.

- Lighting on the face may not match the room.

- Micro-expressions are missing or very smooth.

- Callers may blame a poor connection to explain away video artifacts, since compression glitches look similar.
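
One of the tells above, blinking at very regular intervals, is easy to express in numbers. The snippet below is a minimal sketch, assuming some upstream face-tracking step has already produced blink timestamps in seconds. The function names and the 0.2 threshold are hypothetical and uncalibrated, and this is not how any particular detector works.

```python
from statistics import mean, stdev


def blink_interval_cv(blink_times_s):
    """Coefficient of variation (stdev / mean) of the gaps between blinks."""
    intervals = [b - a for a, b in zip(blink_times_s, blink_times_s[1:])]
    if len(intervals) < 2:
        raise ValueError("need at least three blink timestamps")
    return stdev(intervals) / mean(intervals)


def looks_too_regular(blink_times_s, min_cv=0.2):
    """Flag blink timing whose spread is suspiciously small.

    min_cv is an illustrative threshold, not a calibrated value.
    """
    return blink_interval_cv(blink_times_s) < min_cv


# Hypothetical timestamps: uneven gaps versus a near-constant 3-second rhythm.
natural = [1.2, 4.9, 6.1, 11.8, 13.0, 19.4]
metronomic = [2.0, 5.0, 8.1, 11.0, 14.0, 17.1]

print(looks_too_regular(natural))     # False
print(looks_too_regular(metronomic))  # True
```

A single signal like this is weak on its own; it is only useful next to the other video, audio, and behavioral tells.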

Behavioral / Interaction-Level Tells:

- Deepfake operators avoid unexpected verification requests.

- They may contact you first on informal apps before using official channels.

- Urgency and secrecy together are a warning sign.

- They resist out-of-band checks, like calling a known number.
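
The behavioral tells above can be turned into a simple escalation rule for agents. What follows is a rough sketch, not a validated scoring model: the field names, weights, and threshold are all assumptions chosen for illustration. The one idea taken directly from the list is that urgency and secrecy together count for more than either one alone.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    urgent: bool = False
    asks_for_secrecy: bool = False
    first_contact_informal: bool = False  # e.g. reached out on a chat app first
    refused_callback: bool = False        # resisted an out-of-band check
    dodged_verification: bool = False     # avoided an unexpected verification request


# Illustrative weights only; tune against your own incident history.
WEIGHTS = {
    "urgent": 1,
    "asks_for_secrecy": 1,
    "first_contact_informal": 1,
    "refused_callback": 3,
    "dodged_verification": 2,
}


def risk_score(interaction):
    score = sum(w for name, w in WEIGHTS.items() if getattr(interaction, name))
    # Urgency and secrecy together are a warning sign, so add a bonus.
    if interaction.urgent and interaction.asks_for_secrecy:
        score += 2
    return score


def should_escalate(interaction, threshold=3):
    return risk_score(interaction) >= threshold


print(should_escalate(Interaction(urgent=True)))                         # False
print(should_escalate(Interaction(urgent=True, asks_for_secrecy=True)))  # True
print(should_escalate(Interaction(refused_callback=True)))               # True
```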

Leveraging AI Detection Tools

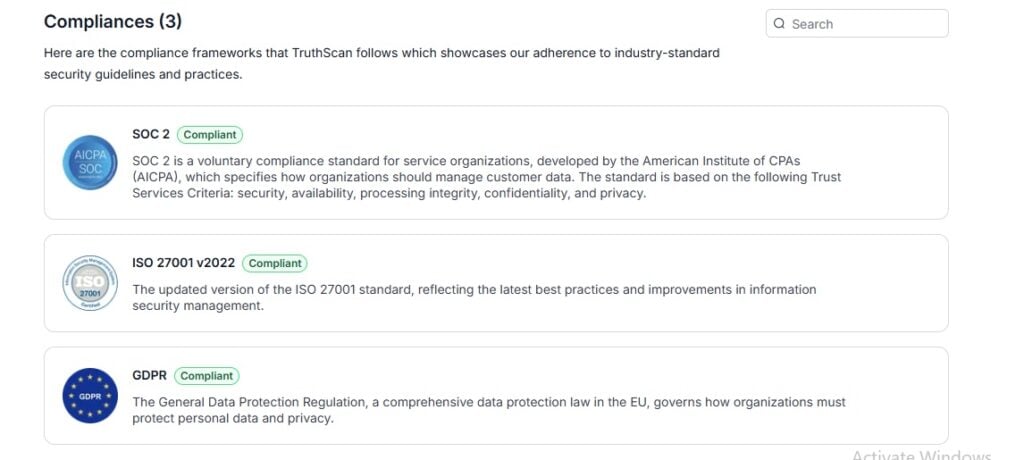

TruthScan is a powerful platform designed for automated fraud detection, trusted by over 250 million users.

It’s ISO 27001 and SOC 2 certified, GDPR compliant, and works seamlessly with tools like Salesforce, Microsoft 365, Google Workspace, SAP, and Zoom.

- Deepfake Detector

This deepfake detector identifies AI-generated videos, face swaps, and manipulated media across all major formats (MP4, AVI, MOV, MKV, WebM) up to 4K.

Here’s how it works:

- Tracks facial movements, blinking patterns, and microexpressions.

- Spots pixel-level inconsistencies, lighting mismatches, and frame-to-frame artifacts.

- Detects output from generative models and tools like AnimateDiff, D-ID, HeyGen, Runway Gen-4, Stable Video Diffusion, and more.

Upload a known real video and a deepfake (HeyGen/D-ID) at Truthscan.com/deepfake-detector to compare the results.

- AI Image Detector

The TruthScan AI image detector flags AI-generated or manipulated images from platforms like Midjourney, DALL-E, Stable Diffusion, Canva AI, Grok Imagine, StyleGAN, and ThisPersonDoesNotExist.

Here’s how it works:

- Flags background swaps, object removal, and lighting edits.

- Generates confidence scores and pixel-level insights without storing files.

Upload real vs. AI-generated images at Truthscan.com/ai-image-detector to compare confidence scores.

Securing Support Channels Effectively

In 2026, customer support teams are under constant threat from AI-driven fraud.

Attackers can fake voices, swap faces in live video, or submit manipulated files, making it nearly impossible for human agents to tell what’s real and what’s not.

That’s why securing support channels is essential.

- Real-time AI Detector

The Real-Time AI Detector is designed to catch AI-generated content before it ever reaches your agents or customers.

Unlike file-based tools that analyze uploads, this platform monitors live interactions across email, chat, phone, and video channels.

How it helps your team:

- Detects AI impersonation attempts in real time.

- Flags AI-generated messages or calls that try to trick agents into giving access or processing requests.

- Works with Salesforce, Teams, Gmail, Zoom, and more to provide automated fraud detection without slowing down operations.

- Handles millions of interactions across all channels.

- SOC 2 and ISO 27001 certification, plus GDPR compliance, mean every action is auditable and court-ready.

Policies and Training for Teams

- Standard operating procedures

| Policy Name | Policy |
| --- | --- |
| Multi-Factor Verification | Require MFA for account resets, high-value transactions, and security changes. |
| Channel Verification | Redirect all requests from informal channels (WhatsApp, Telegram, personal email) to official support systems. |
| Contact Logging | Agents must log unusual contacts including channel, time, and request nature. |
| Safe Word System | Use pre-registered verification phrases for high-value accounts. |
| Out-of-Band Verification | Any internal escalation or executive request must be verified via callback to official directory numbers. |
| No Solo Approval | Never authorize transactions or security changes based solely on video calls or emails. |
| Voice Confirmation | Require voice confirmation for all fund transfers above a defined threshold. |
| Media Verification | Scan all photos, videos, and screen recordings through TruthScan detectors before human review. |
| Metadata Checks | Use an AI image detector to flag inconsistent EXIF or timestamps. |
| Secondary Review | High-value claim submissions must undergo a secondary review before processing. |
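
Policies like these are easier to enforce when they are written down as rules a ticketing system can evaluate. Here is a minimal sketch of that idea, assuming a simplified request record; the field names, action labels, check names, and the 1,000 threshold are hypothetical placeholders, not part of any real product or API.

```python
from dataclasses import dataclass

INFORMAL_CHANNELS = {"whatsapp", "telegram", "personal_email"}


@dataclass
class SupportRequest:
    channel: str
    action: str                     # e.g. "account_reset", "fund_transfer", "claim"
    amount: float = 0.0
    is_internal_escalation: bool = False
    has_media: bool = False


def required_checks(req, high_value_threshold=1000.0):
    """Return the checks from the policy table that apply to this request."""
    checks = []
    high_value = req.amount > high_value_threshold
    if req.channel.lower() in INFORMAL_CHANNELS:
        checks.append("redirect_to_official_channel")
    if req.action in {"account_reset", "security_change"} or high_value:
        checks.append("mfa")
    if req.is_internal_escalation:
        checks.append("callback_to_directory_number")
    if req.action == "fund_transfer" and high_value:
        checks.append("voice_confirmation")
    if req.has_media:
        checks.append("media_scan_before_review")
    if req.action == "claim" and high_value:
        checks.append("secondary_review")
    return checks


request = SupportRequest(
    channel="WhatsApp",
    action="fund_transfer",
    amount=5000,
    is_internal_escalation=True,
)
print(required_checks(request))
```

Here the request arrives on an informal channel, is high value, and claims to be an internal escalation, so it picks up four checks before anyone acts on it. A routine request on an official channel returns an empty list.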

- Staff fraud awareness training

Employees need to experience realistic deepfakes. Research shows that detection rates jump from 34% to 74% after about a dozen live simulations involving an AI video detector.

Red flags to watch for:

- Urgency paired with secrecy

- Requests outside normal channels

- Resistance to callback or secondary verification

- Bypassing standard processes

- Emotional pressure (fear, guilt, authority)

- Routine security reviews

In 2026, even the best SOPs require regular auditing through automated fraud detection protocols:

| Policy Name | Policy |
| --- | --- |
| Quarterly Threat Assessment | Check for new deepfake tools, validate detection thresholds, and review near-miss incidents. |
| Channel Security Audit | Test each support channel for weak spots; attackers target the path of least resistance. |
| Detection Tool Validation | Periodically run known fakes through TruthScan to ensure detection accuracy keeps pace with evolving AI methods. |
| Incident Response Drills | Conduct tabletop exercises simulating deepfake impersonations to test notifications, lockdowns, and response speed. |
| Vendor & Third-Party Checks | Verify that external communications from partners or customers follow the same strong authentication standards as internal channels. |
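
Detection tool validation comes down to simple arithmetic on a labeled test set. The sketch below assumes you already have confidence scores between 0 and 1 for a batch of known fakes and known genuine samples, however they were obtained. The function name, the 0.5 threshold, and the pass criteria are illustrative assumptions, not vendor guidance.

```python
def validate_detector(samples, threshold=0.5, min_recall=0.9,
                      max_false_positive_rate=0.05):
    """Summarize detector performance on labeled samples.

    samples: iterable of (score, is_fake) pairs, where score is the
    detector's confidence that the item is fake.
    """
    samples = list(samples)
    fakes = [score for score, is_fake in samples if is_fake]
    reals = [score for score, is_fake in samples if not is_fake]
    if not fakes or not reals:
        raise ValueError("need both known fakes and known genuine samples")

    recall = sum(s >= threshold for s in fakes) / len(fakes)
    false_positive_rate = sum(s >= threshold for s in reals) / len(reals)
    return {
        "recall": recall,
        "false_positive_rate": false_positive_rate,
        "passed": recall >= min_recall
        and false_positive_rate <= max_false_positive_rate,
    }


# Hypothetical scores from one validation run.
run = [
    (0.97, True), (0.91, True), (0.42, True), (0.88, True),
    (0.03, False), (0.10, False), (0.61, False), (0.05, False),
]
print(validate_detector(run))
# {'recall': 0.75, 'false_positive_rate': 0.25, 'passed': False}
```

In this made-up run, one fake slips under the threshold and one genuine file is flagged, so the check fails and the threshold or the tooling needs another look.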

How TruthScan Strengthens Customer Support Security

TruthScan is built to stop AI fraud at every level of customer support. It explains why something is suspicious, so teams can act confidently.

| Tool | Purpose & Benefit |
| --- | --- |
| AI Voice Detector | Spots cloned voices in calls and recordings, protecting live conversations from impersonation. |
| Deepfake Detector | Analyzes videos and images to catch face swaps, synthetic personas, and manipulated media. |
| AI Image Detector | Flags AI-generated profile pics, fake IDs, and manipulated screenshots before any decision is made. |
| Real-Time AI Detector | Continuously monitors live chat, email, and video with sub-second automated fraud detection alerts. |
| Fake Receipt Detector | Detects AI-generated receipts or proof documents used in fraudulent claims. |
| Email Scam Detector | Catches AI-generated phishing attempts and BEC attacks before they reach the inbox. |

How it fits a customer support security stack:

- Inbound media (photos, videos, recordings) → Deepfake Detector + AI Image Detector before human review

- Live support calls → AI Voice Detector analyzing call recordings or live streams

- Video support sessions → Deepfake Detector in real-time or post-call

- Email and chat channels → Real-Time AI Detector for continuous monitoring

- Document submissions (IDs, receipts, screenshots) → Fake Receipt Detector + AI Image Detector
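
The mapping above is essentially a routing table. As a rough illustration, it could be captured in a few lines so that every inbound item is sent to the right checks before a human sees it. The detector names come from the list above; the item-type keys and the `detectors_for` helper are hypothetical, and nothing here calls a real TruthScan API.

```python
# Item-type keys are made-up labels; values are the tool names listed above.
ROUTES = {
    "inbound_media": ["Deepfake Detector", "AI Image Detector"],
    "live_call": ["AI Voice Detector"],
    "video_session": ["Deepfake Detector"],
    "email": ["Real-Time AI Detector"],
    "chat": ["Real-Time AI Detector"],
    "document": ["Fake Receipt Detector", "AI Image Detector"],
}


def detectors_for(item_type):
    """Return the detectors an item should pass through before human review."""
    if item_type not in ROUTES:
        # Unknown types are rejected rather than silently skipping checks.
        raise ValueError(f"no route defined for item type: {item_type!r}")
    return list(ROUTES[item_type])


print(detectors_for("document"))
# ['Fake Receipt Detector', 'AI Image Detector']
```

Raising an error for unknown item types is a deliberate choice in this sketch: a new channel should get an explicit route instead of bypassing detection by default.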

Talk to TruthScan About Deepfake Protection in Support

Don’t wait until it’s too late. TruthScan helps your team detect AI-generated content before it reaches your agents.

Choose how to get started:

- Test It Yourself: Try all TruthScan detection tools with 20,000 free credits. No payment required. → Start your free trial at Truthscan.com

- Enterprise Demo: Let our team show you a tailored deployment for Salesforce, Microsoft 365, Zoom, and Google Workspace. → Book a demo at truthscan.com/contact

Secure your support channels today, because seeing is no longer believing; verifying is.