For decades, we used CAPTCHAs to prove to computers that we were human.

Now, the tables have turned. AI is passing security checks to prove to us that it is human. The lines have blurred entirely.

A decent cup of coffee costs $6.

A flawless, AI-generated driver’s license that can completely bypass your company’s legacy security system costs just $15.

It’s an era of cheap, scalable identity fraud, where fraudsters don’t need coding skills. They just need a credit card.

If you’re still relying on basic automated identity verification, you are already a target.

In this blog, we’ll cover the top 5 ways these synthetic fakes are slipping right through your front door.

Let’s get into it.

Key Takeaways

- People can only spot high-quality deepfakes about 24.5% of the time, so AI detection tools are now essential.

- Basic ID checks are easily bypassed by injected video feeds and AI-enhanced images.

- Strong AI tools are required to catch fake faces and synthetic images that look real to humans.

- Autonomous AI agents can now run fraud attempts and improve themselves in real time.

- Simple tricks like surprise actions or zooming in can sometimes reveal hidden flaws.

- TruthScan detects advanced AI fraud with over 99% accuracy in under 500 milliseconds.

What are AI-Generated Identity Images?

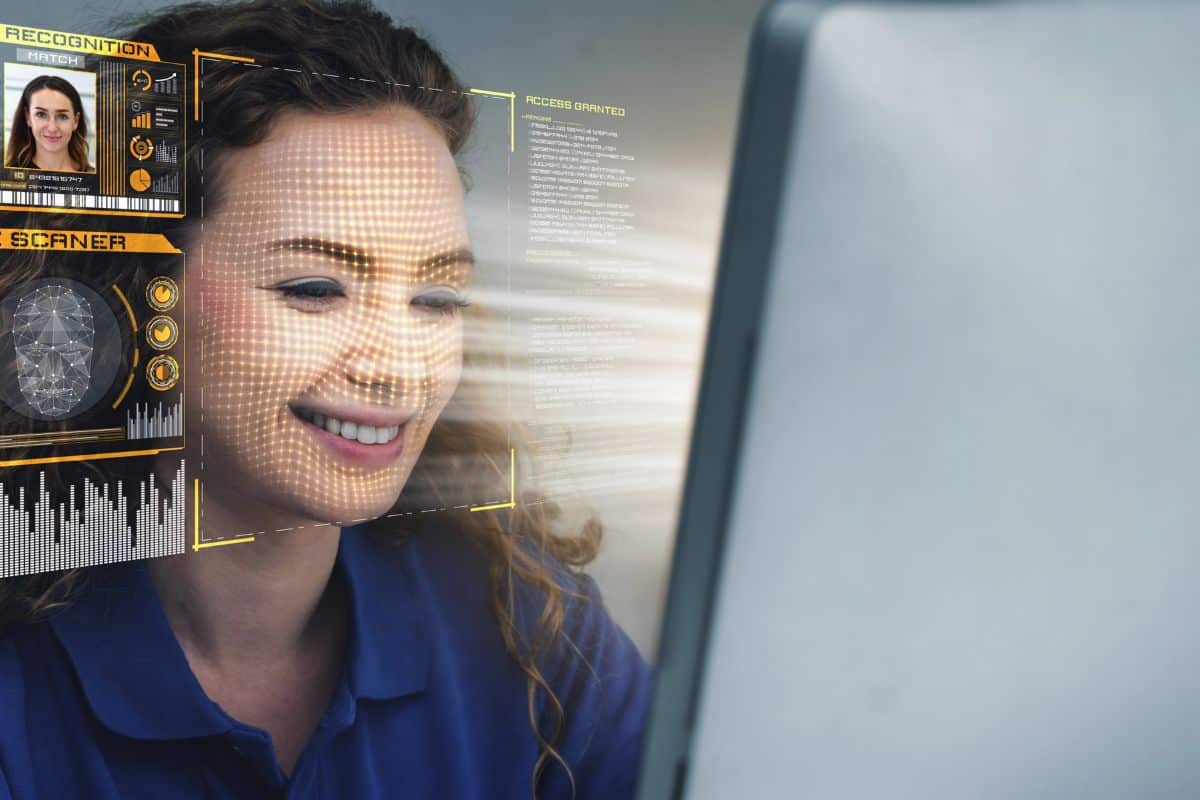

AI-generated identity images are machine-made photos of faces or ID documents that look 100% authentic but do not belong to any real person or physical record.

These are the primary tools used in modern AI identity fraud.

We’re seeing the rise of fake identities for three main reasons:


1. Collapse of Cost and Skill: Today, you just need a prompt to bypass fake ID detection.

2. Sites like OnlyFake: Offering high-quality AI driver’s licenses for $15.

3. Weakness of Digital Onboarding: Most companies rely on uploads, making automated identity verification a prime target for synthetic fakes.

Weaknesses in Traditional Verification Systems

Traditional systems were not built for AI identity fraud that mimics reality with high precision.

Here is why they fail against AI-generated fraud:

- Human reviewers check for obvious edits, but AI-generated IDs contain flaws at a pixel level that are impossible to detect with the naked eye. Without a dedicated AI image detector, these microscopic errors go unnoticed.

- Systems validate formats and data rules, but fake ID detection has become harder because AI can now generate barcodes and text that align perfectly with fake identity details.

- Facial recognition compares ID photos to selfies, but fraudsters use AI to create entirely fake identities that match across both, tricking standard automated identity verification flows.

- Basic motion and animation checks are defeated by real-time deepfake tools that alter faces and voices during verification.

- Fraudsters know verification checklists and train AI to meet only those exact criteria, ensuring easy approval.

In 2026, looking real is no longer proof of being real. Traditional systems that rely on visual checks or basic data rules are essentially open doors for AI identity fraud.

Advanced Techniques Used by AI-Generated Images

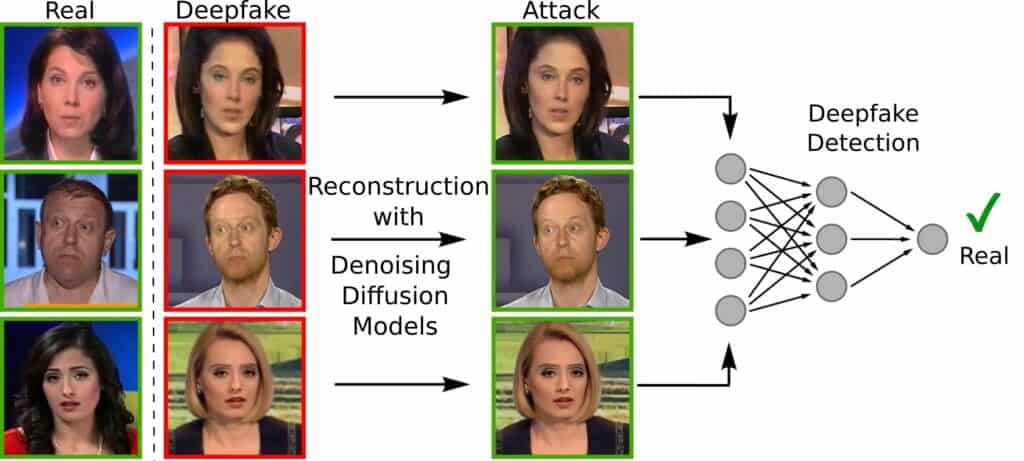

- Deepfake facial generation

Deepfakes use AI to create completely new, realistic human faces, or to place one person’s face onto another’s body so seamlessly it looks real.

Here’s how they work:

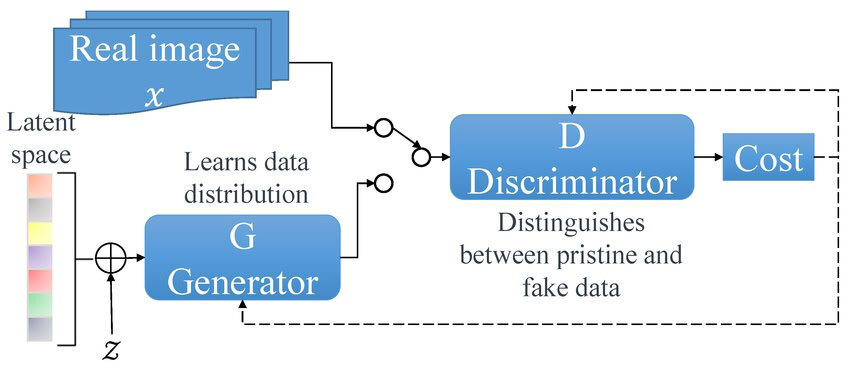

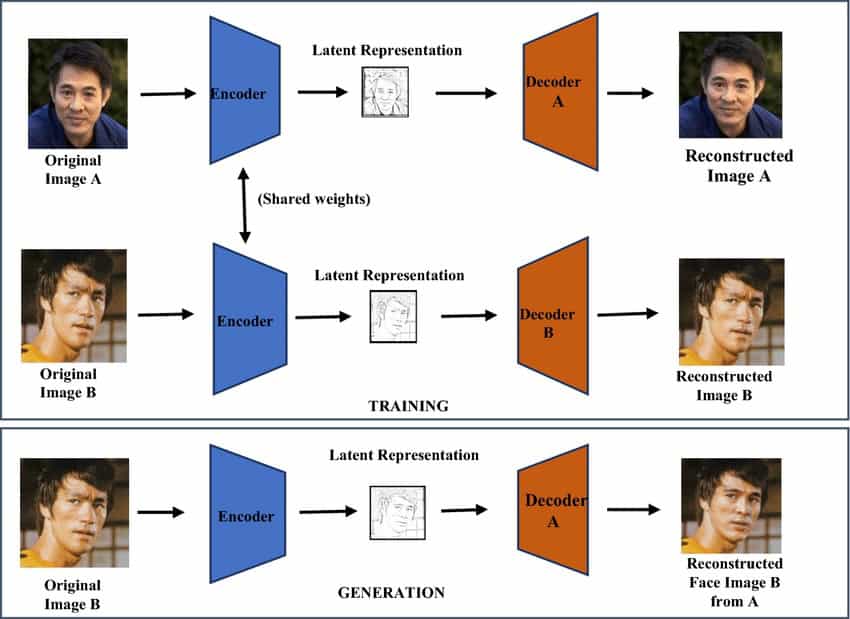

1. In Generative Adversarial Networks (GANs), two AI models are involved. One creates fake faces and the other tries to detect them, and training continues until the results become hard to distinguish from real images.

2. Diffusion models start with random noise and gradually turn it into a detailed image based on instructions, often producing more realistic, high-resolution results than GANs.

3. The encoder-decoder (autoencoder) method captures the expressions of one face, then rebuilds them onto another face.

Deepfake attacks against identity verification (IDV) systems surged 3000% in 2023.

But studies show that humans can only identify high-quality deepfake videos 24.5% of the time. In other words, you have a better chance of winning a coin toss than spotting a deepfake with your own eyes.

In this case, you need a detector that’s as advanced as these deepfake generators.

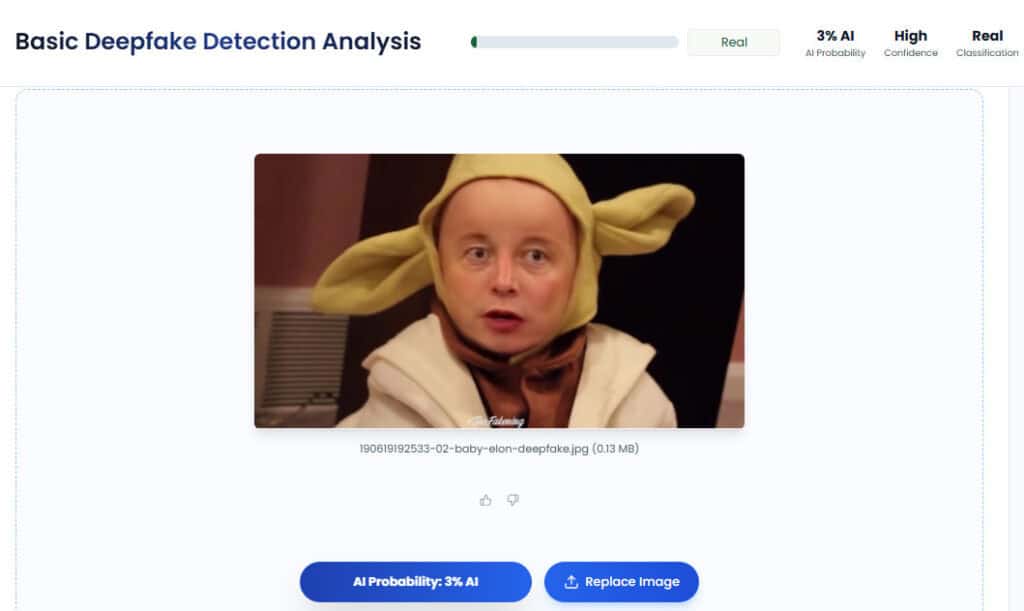

TruthScan’s Deepfake Detector is built to catch the hidden mathematical structures left behind by StyleGAN, Diffusion models, and ThisPersonDoesNotExist portrait tools. Detect synthetic identity photos with TruthScan’s Deepfake Detector.

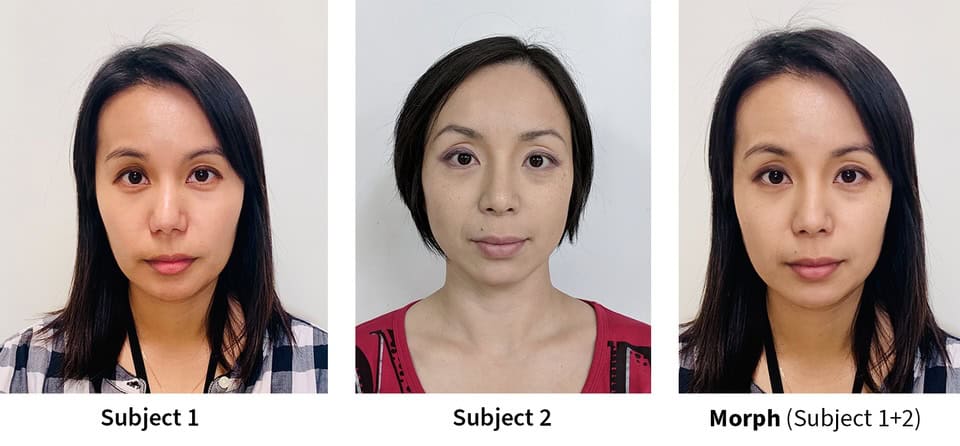

- Morphing and synthesis

Morphing blends the facial features of two real people into a single photo. This photo looks enough like Person A and Person B to successfully authenticate as either one, bypassing many fake ID detection protocols.

- Older systems map facial features (eyes, nose, mouth) from two faces and blend them into one combined image.

- New AI models create morphs without obvious traces, making them hard for both humans and systems to detect.

- Fraudsters mix real personal data (like name and DOB) with a morphed photo, creating identities that look believable but aren’t fully real.
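To see why a morph can authenticate as either person, here is a minimal sketch. It assumes, as a simplification, that a face matcher turns each photo into an embedding vector and accepts a pair when their cosine similarity clears a threshold. The vectors and the 0.6 threshold are made-up illustration values, and blending embeddings directly is a stand-in for blending the photos themselves.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def blend(u, v, alpha=0.5):
    """Linear blend of two vectors; alpha=0.5 weights both equally."""
    return [alpha * a + (1 - alpha) * b for a, b in zip(u, v)]

# Hypothetical embeddings for two different people (illustration only).
person_a = [1.0, 0.0, 0.2]
person_b = [0.0, 1.0, 0.2]
morph = blend(person_a, person_b)

THRESHOLD = 0.6  # assumed acceptance threshold, not a real product setting

def matches(u, v):
    """True when the matcher would treat the two faces as the same person."""
    return cosine(u, v) >= THRESHOLD
```

With these toy values, `matches(morph, person_a)` and `matches(morph, person_b)` both hold, while `matches(person_a, person_b)` does not. The blended face sits close enough to each source to pass, even though the two sources look nothing alike to the matcher.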

In synthesis, fraudsters build a new person by combining real stolen data with AI-generated details.

They pair a real SSN with a fake face, so automated identity verification validates the data while the AI-generated face passes the visual checks.

- AI-enhanced resolution

AI-enhanced resolution means using super-resolution algorithms to take blurry, stolen, or low-quality images and upscale them into sharp, high-fidelity photos that appear 100% authentic, often fooling a basic AI image detector.

Unlike traditional zooming, AI enhancement invents missing details based on its training.

- Tools like Real-ESRGAN and GFPGAN are trained on millions of image pairs, allowing them to add fine details like skin texture, lighting, and sharp facial features.

- This means a rough or AI-generated face can be upgraded into a clean, ID-quality portrait.

- The same applies to documents. AI can sharpen text, enhance holograms, and even simulate the texture of a physical card.

Common Slip-Through Scenarios

Here are the 3 most common ways AI-generated identities are currently beating automated identity verification systems in 2026.

Scenario 1: Upload-Only KYC at Crypto Exchanges & Fintech Platforms

Many crypto and fintech apps let you upload a saved photo of your ID instead of taking a live picture. With no live check, this is a massive open door for identity fraud.

A fraudster can spend $15 on a site like OnlyFake to bypass fake ID detection by uploading a high-quality digital driver’s license.

Scenario 2: Camera Injection Attacks

Instead of pointing a phone at their face, the hacker uses software to plug a pre-made deepfake video directly into the app’s data stream. The app thinks it’s seeing a live person through a lens, but it’s actually playing a digital movie.

Scenario 3: Paired Synthetic Attacks

Systems that compare your ID photo to your selfie are easily fooled by AI identity fraud. A fraudster creates a brand-new AI face, puts that face on a fake ID, and uses it to create a matching selfie video.

Since the computer sees that the two faces match, it grants access, even though neither the person nor the ID exists in the real world.

Tools and Methods to Detect AI-Generated IDs

To stay safe from identity fraud, businesses must use a specialized AI image detector alongside smart manual tricks. For example:

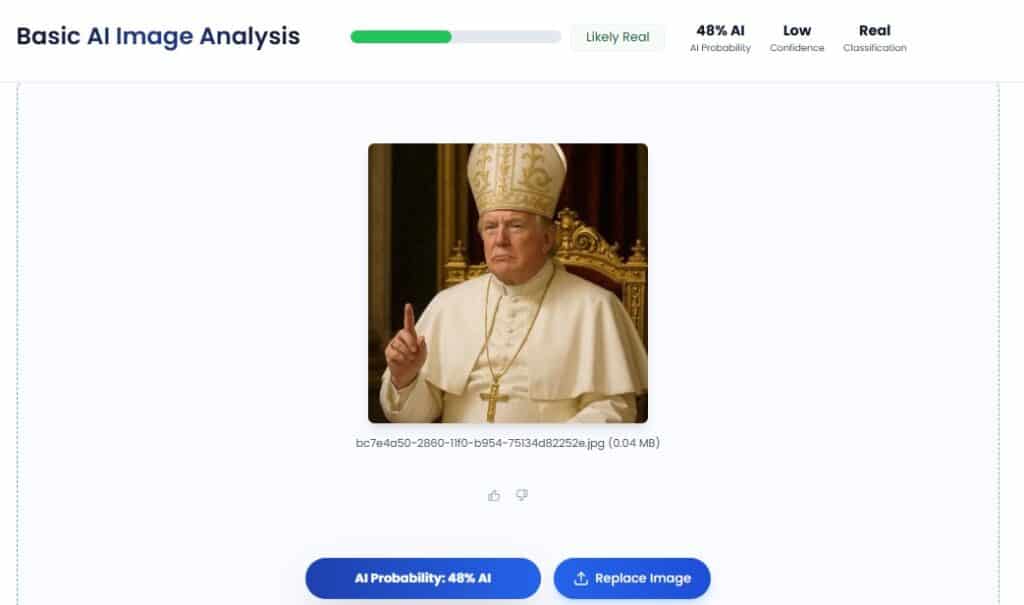

Tool: TruthScan (Best for Fast, All-in-One Checks)

TruthScan is the go-to platform for companies that need to scale their automated identity verification quickly and securely.

| AI Image Detector | Deepfake Detector |
| --- | --- |
| Identifies static images created by AI (DALL-E, Midjourney, Stable Diffusion) | Detects video manipulations, face swaps, and synthetic motion |
| Catching StyleGAN faces that look real but are AI identity fraud | Catching artifacts from live software and high-stakes document forgery |
| Scans IDs and selfies in under 500ms (half a second) | Provides real-time analysis for live AI verification streams |
| First to detect the ultra-realistic Nano Banana 2.5 model in late 2025 | Successfully identified fraudulent government employee IDs in a 2025 forensic test |

Check identity images in seconds with TruthScan’s AI Image & Deepfake Detectors.

3 Ways To Manually Check AI-Generated IDs

Note: Smart AI can beat these tricks, so always use TruthScan’s fake ID detection software alongside them.

Method 1: The Surprise Test

During a live video call, ask the person to wave an object in front of their face. Many deepfakes will flicker or distort around the object, allowing your internal AI verification team to spot the glitch.

Method 2: Looking at the Metadata

AI-generated images often have blank metadata. If the file info doesn’t match a real camera device, it’s a red flag for identity fraud.
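As a rough illustration of this check, the sketch below scans the header of a JPEG for an Exif block using only the Python standard library. The function name `has_exif_segment` is hypothetical, it handles JPEG files only, and it tests for the presence of metadata, not whether the metadata is genuine. Treat a missing Exif block as a weak signal: many messaging apps strip metadata from real photos, and a fraudster can forge it.

```python
import struct

def has_exif_segment(jpeg_bytes: bytes) -> bool:
    """Return True if the JPEG header contains an APP1 segment holding Exif data."""
    if jpeg_bytes[:2] != b"\xff\xd8":  # every JPEG starts with the SOI marker
        raise ValueError("not a JPEG file")
    pos = 2
    while pos + 4 <= len(jpeg_bytes):
        if jpeg_bytes[pos] != 0xFF:
            break  # not at a marker, so stop scanning
        marker = jpeg_bytes[pos + 1]
        if marker == 0xDA:  # start of scan: header segments are over
            break
        # The 2-byte big-endian length counts itself plus the payload.
        (length,) = struct.unpack(">H", jpeg_bytes[pos + 2:pos + 4])
        payload = jpeg_bytes[pos + 4:pos + 2 + length]
        if marker == 0xE1 and payload.startswith(b"Exif\x00\x00"):
            return True
        pos += 2 + length
    return False
```

A reviewer could run it on an uploaded file with `has_exif_segment(open("upload.jpg", "rb").read())`, where `upload.jpg` is a placeholder path, and escalate files that return `False` for a closer look.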

Method 3: The 400% Zoom

Zoom in to around 400% on holograms and other fine details. AI often struggles with tiny details, so manual fake ID detection gets easier if you know where to look for blurry patterns.

Quick Comparison: Tools vs. Humans

| Feature | TruthScan | Human Review |
| --- | --- | --- |
| Speed | Instant (under 1 second) | 5–10 minutes |
| Accuracy | 99%+ (reliable AI verification) | Low (we make mistakes) |
| Deepfakes | Can spot hidden AI math | Very hard to see |

Evolving Threats and Solutions

Here is the breakdown of the most dangerous emerging threats in identity fraud and the high-tech solutions fighting back.

- AI Fraud Agents

Fraud is automated end-to-end. AI fraud agents can generate fake IDs, submit them, interact with verification systems, and learn from failures to improve future attempts.

As a result, fraud is becoming faster, smarter, and more scalable. Organized fraud networks are expected to make these agents mainstream within the next 18 months (Sumsub 2025-2026 Report).

- Real-Time Deepfakes at Scale

Tools like DeepFaceLive have made deepfakes fast enough for live conversation.

Deepfakes can now convincingly smile, nod, or blink on command. This makes passive liveness checks (just watching for movement) completely insufficient for high-security verification.
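A common countermeasure is active liveness: ask for actions the attacker cannot predict or pre-render. The sketch below shows one way to pick such prompts. The prompt list and the function name `pick_challenges` are hypothetical, and unpredictability raises the bar for an attacker without guaranteeing detection.

```python
import secrets

# Hypothetical pool of prompts; a real deployment would define its own.
CHALLENGES = [
    "turn your head left",
    "turn your head right",
    "cover one eye with your hand",
    "hold up three fingers",
    "read these digits aloud",
]

def pick_challenges(count=3, pool=CHALLENGES):
    """Pick `count` distinct prompts in an unpredictable order."""
    if count > len(pool):
        raise ValueError("count exceeds the number of available prompts")
    remaining = list(pool)
    chosen = []
    for _ in range(count):
        # secrets draws from the OS random source, so the sequence is hard to guess.
        chosen.append(remaining.pop(secrets.randbelow(len(remaining))))
    return chosen
```

Calling `pick_challenges()` for each session gives every applicant a different sequence, so a deepfake clip prepared in advance is unlikely to match it.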

- Fraud-as-a-Service Marketplaces

You no longer need to be a tech genius to commit identity fraud. Underground Telegram and Dark Web shops now sell Complete ID Fraud Kits.

Deepfake fraud in identity verification (IDV) surged 704% recently, with 88% of all cases targeting cryptocurrency exchanges.

To survive in 2026, verification systems are moving toward provenance (checking where a file came from) rather than just analysis (checking what a file looks like).

- Injection Attack Detection (IAD): New standards (ISO 25456) ensure AI verification systems can detect whether video input is from a real camera or injected by fraud software.

- Cryptographic Metadata (C2PA): Companies like Google, Microsoft, and Adobe embed secure digital signatures in images to verify their source, time, and device.

- Invisible Watermarking (SynthID): Google’s SynthID embeds hidden marks in AI-generated images, and a compatible detector can find them even after the photo has been edited.

- NFC Chip Verification: Validating the cryptographically signed chip inside e-passports is the gold standard for fake ID detection.

- Multi-Modal Layering: The most effective defense combines document checks, device data, and user behavior into one layered system.
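To make the layering idea concrete, here is a minimal sketch that combines three risk signals into one score. The `Signals` fields, the weights, and the function name are all assumptions for illustration. Real systems tune these values on labeled fraud data and often use more than a weighted average.

```python
from dataclasses import dataclass

@dataclass
class Signals:
    """Hypothetical per-applicant risk signals, each in [0, 1] (1 = riskiest)."""
    document_risk: float  # e.g. output of an AI image detector
    device_risk: float    # e.g. emulator or virtual-camera indicators
    behavior_risk: float  # e.g. scripted or unusually fast form filling

# Assumed weights for illustration only.
WEIGHTS = {"document_risk": 0.5, "device_risk": 0.3, "behavior_risk": 0.2}

def combined_risk(s: Signals) -> float:
    """Weighted average of the layers, so no single check decides alone."""
    return sum(getattr(s, name) * weight for name, weight in WEIGHTS.items())
```

For example, a near-perfect fake ID submitted from an emulator by a script, `Signals(0.1, 0.9, 0.8)`, scores about 0.48, while a clean applicant at `Signals(0.1, 0.1, 0.1)` scores about 0.1. The document check alone would have scored both the same.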

Best Practices to Prevent Verification Fraud

Here are the 7 industry best practices used by top-tier firms to stay ahead of synthetic fraud:

| Best Practice | Strategy | Importance |
| --- | --- | --- |
| Multi-Layer Verification | Use multiple checks: ID scan + face match + device + behavior | One check can fail. Multiple layers make fraud much harder |
| Active Liveness Checks | Ask users to do random actions (not just blink/smile) | Stops deepfakes that replay or mimic basic movements |
| Injection Attack Detection (IAD) | Monitor if fake video/data is directly fed into the system | Catches fraud that bypasses the camera completely |
| AI Document Forensics | Use AI to analyze image details, not just read text | Detects hidden flaws in fake IDs that humans can’t see |
| Cross-Database Validation | Match ID details with official government records | Even perfect-looking IDs fail if the person doesn’t exist |
| Post-Onboarding Monitoring | Track behavior after signup (transactions, device changes) | Most fraud happens after account approval |
| Staff Training & Response | Train teams to spot fraud and handle attacks quickly | Human awareness reduces scams and deepfake-based attacks |

How TruthScan Secures Identity Verification

With AI identity fraud losses hitting $200M in Q1 2025 alone, enterprises can no longer rely on manual checks or basic AI verification tools.

Here is how TruthScan is securing the future of identity verification.

- Protecting over 250 million users worldwide (2025-2026).

- Delivers 99%+ detection accuracy on custom enterprise models.

- Real-time results in under 500ms for enterprise deployments.

- A sub-organization of Undetectable AI (20M+ users), led by CEO Christian Perry.

- SOC 2 Type II, ISO 27001, and GDPR compliant.

- Featured in Forbes, CBS, and Business Insider.

TruthScan provides a multimodal shield that covers text, images, audio, video, and documents on a single platform.

1. AI Image Detector

This tool identifies images created by DALL-E, Midjourney, and Stable Diffusion. It is specifically trained to catch the faces that don’t exist, like those from StyleGAN and ThisPersonDoesNotExist.

You don’t just get a “Yes/No” answer. You get a confidence score and a visual heatmap showing exactly which parts of the image were manipulated by AI.

2. Deepfake Detector

TruthScan uses computer vision to identify face swaps and manipulated videos up to 4K resolution.

In October 2025, the Genians Security Center used TruthScan to successfully analyze a fraudulent government ID card, proving its reliability in high-stakes forensic research.

It detects both pre-recorded deepfakes and artifacts from live face-swap tools used during video calls.

3. Real-Time Fraud Prevention

Instead of checking IDs after the damage is done, TruthScan analyzes content at the point of submission.

The system can automatically quarantine, flag, or block AI-generated content based on your company’s specific risk thresholds.
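Threshold-based routing of this kind can be sketched in a few lines. This is not TruthScan’s API: the function name `decide`, the score range, the threshold values, and the severity order (flag, then quarantine, then block) are all assumptions for illustration.

```python
def decide(ai_score, flag_at=0.5, quarantine_at=0.8, block_at=0.95):
    """Map a detector confidence score in [0, 1] to an action.

    Thresholds are illustrative defaults, not TruthScan settings.
    """
    if not 0.0 <= ai_score <= 1.0:
        raise ValueError("ai_score must be between 0 and 1")
    if ai_score >= block_at:
        return "block"
    if ai_score >= quarantine_at:
        return "quarantine"
    if ai_score >= flag_at:
        return "flag"
    return "allow"
```

A crypto exchange might lower `block_at` to stop more borderline uploads, while a lower-risk service could keep it high and send more cases to manual review through the flag tier.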

Fraudsters move fast, but TruthScan moves faster. The platform updates its models to cover new AI tools before they become common.

In December 2025, TruthScan released a targeted update for Google’s Nano Banana 2.5 model, whose images tested as the hardest to detect at the time.

Prevent AI-generated ID fraud in real time with TruthScan.

Talk to TruthScan About Preventing Identity Fraud

The era of eyeballing security is over. In a world where AI identity fraud is indistinguishable from the real thing, you need a defense system that evolves as fast as the threats do.

Prevent AI-generated ID fraud in real time with TruthScan.

- Use the AI Image Detector to catch synthetic faces and ThisPersonDoesNotExist portraits.

- Deploy the Deepfake Detector to identify real-time face swaps and 4K video injections.

- Integrate our Enterprise API to process millions of IDs, each in under 500ms.

Start Your Free TruthScan Trial Today