In the 90s, it was DNA.

In the 2000s, it was cell tower pings.

But in 2026, the new gold standard of evidence is the digital fingerprint.

Think of it this way…

Every video file has a unique signature.

If even a single pixel is altered by AI, that fingerprint changes instantly.

If your AI evidence doesn’t have a verified blockchain timestamp to lock that fingerprint in place, it might as well be a cartoon in the eyes of a modern judge.

So this means that the era of “seeing is believing” is over.

We have entered the era of AI video verification, where proof isn’t found in what you see, but in the digital code hidden beneath the surface.

In this blog, we’ll explore why traditional evidence is failing against video fraud, how to spot manipulated clips, why verification is critical for admissibility, and why an AI video detector like TruthScan is now essential for legal teams.

Let’s dive in.

Key Takeaways

- In 2026, all video evidence must undergo AI video verification to be considered reliable.

- Video fraud includes small edits like timestamp manipulation, not just full face-swaps.

- A professional deepfake detector is the only way to catch GAN artifacts that humans miss.

- Proposed rules like Federal Rule of Evidence 707 aim to standardize how AI evidence is admitted in court.

- Automated evidence analysis allows firms to process massive discovery packages quickly and accurately.

- TruthScan prevents fraud during live hearings by detecting synthetic masks in real-time.

What is Video Evidence in Legal Disputes?

Video evidence is basically any recorded clip used in court to prove what happened. From criminal cases to insurance settlements, it is everywhere.

However, in 2026, the rise of video fraud has made “seeing is believing” a dangerous assumption. Legal professionals now require robust AI video verification to ensure the integrity of the justice system.

Types of Video Evidence Courts Use Every Day

- Surveillance (CCTV): Cameras from stores or traffic lights. This is the most common proof in criminal cases.

- Body Cams: Footage from police officers, used mostly in civil rights or excessive force cases.

- Dashcams: The go-to evidence for car accidents and insurance fights.

- Recorded Testimonies: Video depositions or remote witness statements, which have become standard since the pandemic but are difficult to authenticate and therefore prone to fraud.

- Smartphone & Social Media: Videos from bystanders or posts that prove someone’s behavior or location.

- Company Security: Footage used by corporations for fraud or “wrongful firing” cases.

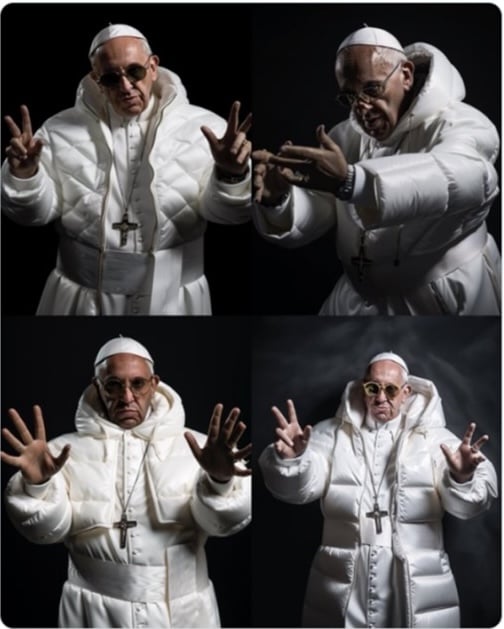

Generative AI and deepfake technology have added a new layer of risk. In 2025:

- 1 in 20 identity verifications were fooled by AI deepfakes

- A Medius survey reported that 43% of professionals had fallen victim to a deepfake fraud attempt

Real-World Cases of Video Evidence Fraud

Manipulated courtroom clips

Manipulated courtroom clips refer to any video or audio evidence submitted in a legal case that has been altered, edited, or completely fabricated using technology.

Examples:

- Alameda County deepfake case (2025): A California civil case was thrown out after the court discovered that a witness video submitted as evidence had been completely created using AI.

- The Sz Huang v. Tesla deepfake defense (2023): In a wrongful death lawsuit, Tesla’s lawyers argued that recorded public statements by Elon Musk might be deepfakes.

Altered surveillance videos

Surveillance video is one of the most common types of video evidence used in court. However, many people mistakenly think of manipulation as only full deepfakes.

In reality, video fraud often happens in smaller, less obvious ways that can compromise a case.

Common types of manipulation include:

- Timestamp changes

- Frame removal

- Metadata or GPS changes

- Face replacement

- Object removal or insertion

- Looping footage

- Quality degradation
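Two of the manipulations above, frame removal and looping, leave traces that simple checks can surface. The following is a minimal sketch, not a forensic tool: it assumes frame timestamps and raw frame bytes have already been extracted, and the function names and toy data are hypothetical.

```python
import hashlib

def find_timestamp_gaps(timestamps_ms, expected_interval_ms, tolerance_ms=1.0):
    """Return (index, gap_ms) pairs where the spacing between consecutive
    frame timestamps exceeds the expected interval, hinting at removed frames."""
    gaps = []
    for i in range(1, len(timestamps_ms)):
        delta = timestamps_ms[i] - timestamps_ms[i - 1]
        if delta > expected_interval_ms + tolerance_ms:
            gaps.append((i, delta))
    return gaps

def find_repeated_frames(frames):
    """Return (first_index, repeat_index) pairs for byte-identical frames,
    which can indicate looped footage."""
    seen = {}
    repeats = []
    for i, frame in enumerate(frames):
        digest = hashlib.sha256(frame).hexdigest()
        if digest in seen:
            repeats.append((seen[digest], i))
        else:
            seen[digest] = i
    return repeats

# Toy data: 25 fps footage (40 ms per frame) with frames missing before index 3.
timestamps = [0, 40, 80, 200, 240]
gaps = find_timestamp_gaps(timestamps, expected_interval_ms=40)

# Toy frames as raw bytes; frames 0 and 3 are identical.
frames = [b"frame-A", b"frame-B", b"frame-C", b"frame-A"]
repeats = find_repeated_frames(frames)
```

Exact byte matching only catches loops that were not re-encoded, and a static scene can legitimately repeat frames, so hits from either check are prompts for closer review rather than proof of tampering.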

Surveillance video often comes from many different places like private stores, home doorbells, parking lots, and city cameras. Unlike police body camera footage, these systems usually don’t follow strict chain-of-custody rules.

That means:

- There may be no clear record of who handled the file.

- The footage may be copied, transferred, or converted multiple times.

- Authentication procedures may be weak or inconsistent.
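One way to picture what a stricter chain of custody adds is a log in which every entry is hashed together with the entry before it, so that rewriting history breaks the chain. This is an illustrative sketch only; the field names, handlers, and helper functions are assumptions, not a description of any court or vendor system.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def _digest(body: dict) -> str:
    """Hash a log entry body in a stable (sorted-key) JSON form."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

def add_entry(log: list, handler: str, action: str, file_hash: str) -> None:
    """Append a custody entry that is linked to the previous entry's hash."""
    prev_hash = log[-1]["entry_hash"] if log else GENESIS
    body = {"handler": handler, "action": action,
            "file_hash": file_hash, "prev_hash": prev_hash}
    log.append({**body, "entry_hash": _digest(body)})

def verify_log(log: list) -> bool:
    """Check that every entry is unmodified and correctly linked."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if entry["prev_hash"] != prev_hash or _digest(body) != entry["entry_hash"]:
            return False
        prev_hash = entry["entry_hash"]
    return True

log = []
video_hash = hashlib.sha256(b"raw doorbell footage").hexdigest()
add_entry(log, "store owner", "exported from DVR", video_hash)
add_entry(log, "paralegal", "copied to case drive", video_hash)
intact = verify_log(log)

# Quietly rewriting an earlier entry invalidates the chain.
log[0]["handler"] = "unknown"
tampered_detected = not verify_log(log)
```

The design choice here is that each entry commits to its predecessor, so a gap or edit anywhere in the record is detectable without trusting whoever holds the log.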

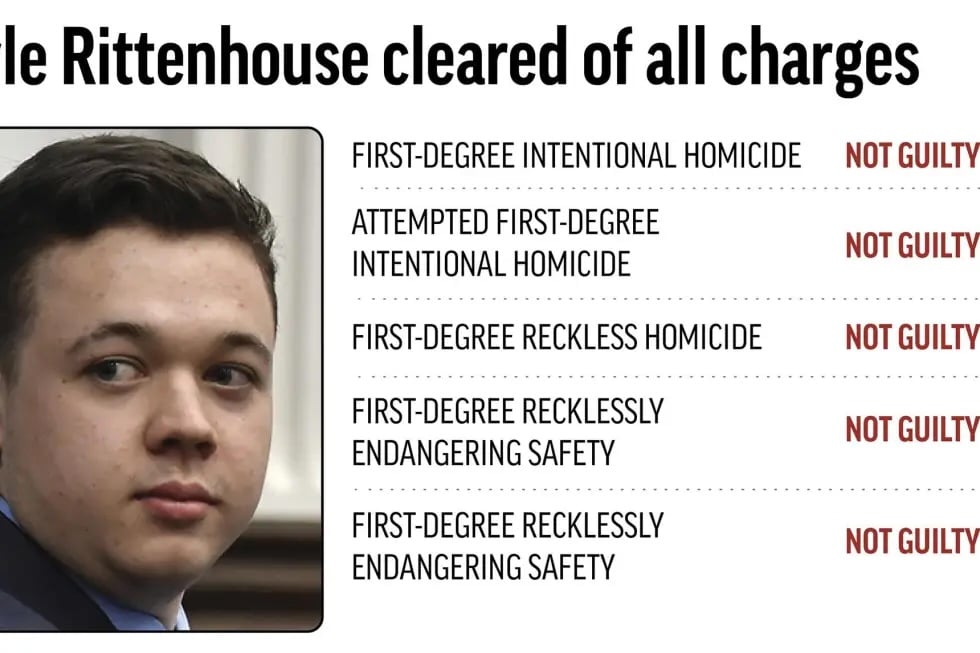

Example: The Wisconsin v. Rittenhouse AI Enhancement Issue

A famous example came from the Wisconsin v. Rittenhouse trial. The prosecution tried to use an iPad’s pinch-to-zoom feature to show detail in a drone video.

The defense argued that Apple’s zoom uses AI interpolation to guess what should be there. The judge put the burden on the prosecution to show that zooming did not alter the footage before the enhanced view could be used.

Disputed video testimonies

A disputed video testimony usually requires legal fraud detection to determine if a recording is:

- A fully fabricated deposition or statement

- A real testimony challenged as fake

- A manipulated real recording

Each scenario creates a serious authentication burden for the court.

Example: The UK Child Custody Case

In a UK family court dispute cited by the University of Baltimore Law Review, a mother submitted a heavily altered recording to portray the father as violent during a custody battle.

The goal was to restrict his access to his children.

Rise of AI-Generated Video in Legal Matters

The journey toward the current deepfake detector era unfolded in distinct phases:

- The Early Warning Years (2017–2021)

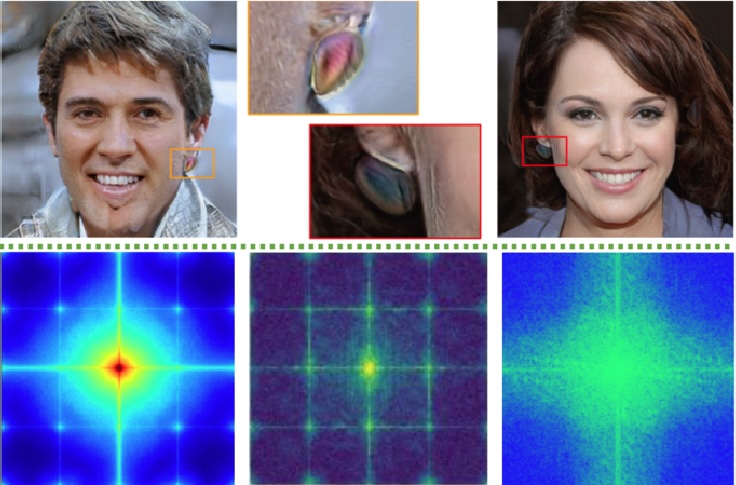

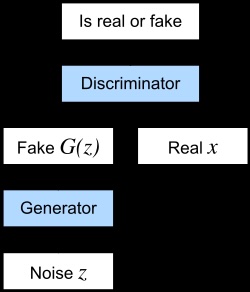

Deepfake technology, powered by generative adversarial networks (GANs), entered the public eye around 2017.

Early deepfakes were often low-quality: faces with weird distortions, extra fingers, mismatched lighting, and blurry features that made them easy to spot.

- The Escalation Phase (2022–2023)

By 2022, the technology had improved. Many deepfake tools were free and usable on a smartphone.

In 2023, we began seeing some of the first significant deepfake-related challenges in court cases, including Sz Huang v. Tesla, United States v. Reffitt, and United States v. Doolin, where counsel raised questions about whether video evidence might be AI-generated.

Around the same time, the Judicial Conference’s Advisory Committee on Evidence Rules formally began studying the issue.

- The Critical Threshold (2024–2025)

AI-generated content didn’t stay academic for long. In 2024, the engineering firm Arup lost about $25 million after an AI-generated spoof video call was used to get fraudulent wire transfers authorized.

The legal system started responding.

In 2025, Louisiana passed HB 178, creating the first state-level AI evidence verification framework.

At the federal level, the U.S. Advisory Committee on Evidence Rules proposed Rule 707, focused on machine-generated evidence.

Where Things Stand in 2026

As of early 2026, deepfake and AI-video regulation has accelerated nationwide:

- 46 states have passed some form of deepfake legislation.

- Since 2022, 169 laws have been enacted, and 146 bills were introduced in 2025 alone.

- Proposed Federal Rule 707 is open for public comment through February 16, 2026.

Enhancing Video Verification With AI

The most effective way to detect AI-generated video is to use AI itself.

This is because deepfakes are created by machine learning systems that leave behind subtle digital patterns.

Those patterns are too small or complex for the human eye to notice. But they can be detected by other algorithms.

A reliable AI video verification system can analyze multiple layers of a video at once, including:

- Frame-level analysis checks each frame for visual errors like strange textures, lighting mismatches, or blending problems around faces.

- Temporal coherence analysis looks at motion across frames to find unnatural jumps or inconsistent movement.

- Facial landmark tracking monitors eye movement, blinking, and facial shape to detect unnatural changes.

- Audio-visual synchronization testing checks if lip movements match the spoken words exactly.

- Audio forensics analyzes the voice for signs of cloning, such as unusual sound patterns or robotic tones.

- Metadata and compression analysis examines hidden file data to see if it matches the original recording details, which is vital for legal fraud detection.
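To make one of these layers concrete, here is a toy version of temporal coherence analysis. It is a sketch under stated assumptions: each frame has already been reduced to a single number (here, a hypothetical mean-brightness value), and the threshold factor is arbitrary. Real detectors work on far richer features.

```python
from statistics import median

def flag_temporal_jumps(frame_signal, factor=5.0):
    """Flag frame indices whose change from the previous frame is much
    larger than the typical frame-to-frame change in the clip."""
    deltas = [abs(b - a) for a, b in zip(frame_signal, frame_signal[1:])]
    typical = median(deltas)
    threshold = factor * max(typical, 1e-9)  # avoid a zero threshold on static clips
    return [i + 1 for i, d in enumerate(deltas) if d > threshold]

# Hypothetical per-frame mean brightness; frame 4 jumps abruptly.
brightness = [100.0, 100.5, 101.0, 100.8, 130.0, 130.4, 130.1]
jumps = flag_temporal_jumps(brightness)
```

A legitimate scene cut produces the same kind of jump as a splice, so a flagged frame is a place to look more closely, not a verdict.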

Educating Legal Teams on Video Fraud

Since AI can now mimic human behavior with terrifying accuracy, law firms are using automated evidence analysis and a mix of traditional tactics to stay ahead.

| Category | Method | Description |
| --- | --- | --- |
| Official Ways | CLE (Continuing Legal Education) Credits | Mandatory or elective courses teaching lawyers how to authenticate digital evidence, understand deepfakes, and meet evidentiary standards. |
| Official Ways | Court-Appointed AI Experts | Establishing certified AI forensic specialists, similar to DNA experts, to evaluate disputed video evidence in court. |
| Non-Official Ways | Internal Red-Teaming | Hiring cybersecurity professionals to test whether fake or manipulated evidence can slip through firm intake systems. |
| Non-Official Ways | “Vibe Check” Protocol | Training staff to spot common deepfake warning signs like unnatural blinking, distorted features, or lip-sync issues before escalating for forensic review. |

Tools and Technology for Evidence Validation

In 2026, we’ve moved past simple eyes-on inspection.

Because AI is now capable of fooling even the most seasoned investigators, legal teams use a specific set of tools to verify that what they are seeing is the absolute truth.

The Technology Behind Evidence Validation

- Deepfake Detectors: These are specialized software programs that scan for GAN artifacts. These are microscopic, mathematical patterns or noise left behind by the AI models that generate synthetic faces. While a human sees a face, the detector sees a digital signature that doesn’t belong.

- TruthScan is a deepfake detector that scans videos frame by frame for AI signs, like unusual blinking, odd facial shapes, or pixel errors where a fake face is added.

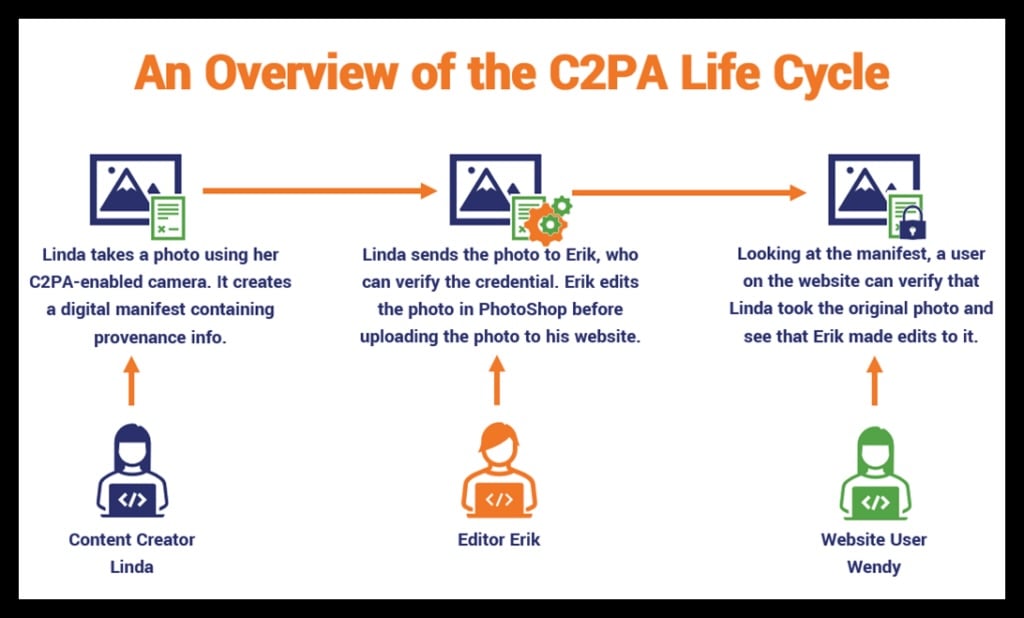

- Blockchain Timestamps: To prove a video hasn’t been touched since the moment it was recorded, many agencies now use provenance standards such as C2PA. When a video is recorded on a compliant device, a unique digital fingerprint (hash) is computed and bound to the file in a cryptographically signed manifest; some workflows also anchor that hash to a blockchain timestamp.

- TruthScan can check if this fingerprint matches the video, showing whether the file was edited or the chain of custody was broken.

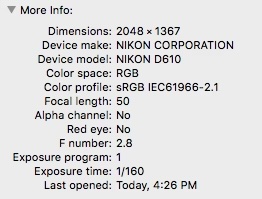

- Metadata Forensic Tools: Most media files carry a kind of birth certificate in their embedded metadata, such as Exif data and container headers. These tools check to see if a file was re-saved in AI editing software or if the location data (GPS) has been spoofed. If a video claims to be from a store camera but the metadata shows it was exported from a video editor, you have a problem.

- Amped Software and TruthScan examine hidden file data (Exif, headers) to see if the video was edited or AI-processed.
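The fingerprint idea behind these tools can be shown with a standard cryptographic hash. The sketch below uses SHA-256 from Python’s standard library; the helper names and the sample bytes are illustrative assumptions, and it does not reproduce how TruthScan or any C2PA implementation stores or signs the value.

```python
import hashlib

def fingerprint(data: bytes) -> str:
    """Return the SHA-256 fingerprint of in-memory data as a hex string."""
    return hashlib.sha256(data).hexdigest()

def fingerprint_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large videos do not need to fit in memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()

def matches(data: bytes, recorded_fingerprint: str) -> bool:
    """Check whether data still matches the fingerprint taken at recording time."""
    return fingerprint(data) == recorded_fingerprint

# Stand-in for video bytes, fingerprinted at the moment of capture.
original = bytes(range(256)) * 4
recorded = fingerprint(original)

# Flip a single bit, as a minimal stand-in for a one-pixel edit.
tampered = bytearray(original)
tampered[100] ^= 0x01

original_ok = matches(original, recorded)
tampered_ok = matches(bytes(tampered), recorded)
```

A mismatch only shows that the bytes changed since the fingerprint was taken; it does not say what changed or why, which is where the other forensic layers come in.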

Trends in AI Video Evidence

Here are three trends shaping 2026.

- Generative Adversarial Forensics: AI vs. AI

The most advanced way to catch a deepfake in 2026 is to use the same technology that created it. This is known as Generative Adversarial Forensics.

- One AI (the generator) tries to create a fake, while another AI (the discriminator) tries to catch it. In the courtroom, tools like TruthScan can be the ultimate discriminator.

- Example: A plaintiff submits a video of a CEO making a verbal contract. TruthScan scans the video using its own adversarial models to detect GAN artifacts. If the software reports a 98% synthetic probability, the evidence is likely a forgery designed to trick human eyes.

- Audio-Video Sync Analysis (The 0.01ms Rule)

Humans can usually spot a delay if a video’s audio is off by about 40–80 milliseconds. However, 2026 deepfakes are often nearly perfect.

- Modern AI forensic tools now look for a 0.01-millisecond delay between the phonetic sound of a word and the mechanical movement of the lips.

- Example: In a 2026 harassment case, a defendant claimed a video was forged. Automated forensic evidence analysis showed that the “M” and “B” lip shapes were 0.02ms out of sync with the audio. This microscopic error indicated that the voice had been cloned and layered over a different video, leading to a dismissal.
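The basic idea behind sync analysis is to slide one signal against the other and find the offset where they line up best. The sketch below is a simplified illustration that works at whole-frame resolution on made-up data; the two input signals (an audio loudness envelope and a mouth-opening measurement per frame) and the 25 fps frame rate are assumptions, and commercial tools presumably use much finer-grained features.

```python
def best_lag(audio_env, mouth_open, max_lag):
    """Return the shift (in frames) of mouth_open relative to audio_env
    that maximizes their overlap (a plain cross-correlation)."""
    best, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for i, a in enumerate(audio_env):
            j = i + lag
            if 0 <= j < len(mouth_open):
                score += a * mouth_open[j]
        if score > best_score:
            best, best_score = lag, score
    return best

# Hypothetical per-frame signals: the mouth movement trails the audio by 2 frames.
audio = [0, 0, 1, 3, 1, 0, 0, 2, 4, 2, 0, 0]
mouth = [0, 0, 0, 0, 1, 3, 1, 0, 0, 2, 4, 2]

lag_frames = best_lag(audio, mouth, max_lag=4)
lag_ms = lag_frames * 1000 / 25  # convert frames to milliseconds at an assumed 25 fps
```

A positive lag means the lips trail the sound; a negative lag means they lead it.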

- Cheapfakes vs. Deepfakes

While deepfakes use high-end AI, cheapfakes are the most common form of video fraud.

- A Deepfake creates a reality that never happened (like a face-swap). A Cheapfake takes real footage and changes the context or intent using simple tools.

- Example (The Slow-Down): A video of a politician appearing drunk or impaired can be created simply by slowing the footage down by 20% and adjusting the audio pitch.

- Example (The Re-Context): Footage from a 2022 protest in another country is posted in 2026 as live evidence of a local riot. Legal teams now use TruthScan’s metadata analysis to prove the video’s actual birth date.
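The slow-down example is easy to quantify. The short calculation below is a sketch that assumes “slowing by 20%” means playing the clip at 0.8x speed with the audio simply resampled along with it; the function name and the 60-second clip are illustrative.

```python
import math

def slowdown_effects(speed_factor: float, duration_s: float):
    """Return the new duration and the pitch shift in semitones when a clip
    is played at speed_factor times its original speed without pitch correction."""
    new_duration = duration_s / speed_factor
    pitch_shift_semitones = 12 * math.log2(speed_factor)
    return new_duration, pitch_shift_semitones

# A 60-second clip slowed to 0.8x speed.
new_duration, shift = slowdown_effects(0.8, 60.0)
```

Under these assumptions the clip stretches to about 75 seconds and the voice drops by roughly 3.9 semitones, which is why a cheapfake author adjusts the pitch back up to make the slowed speech sound natural.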

How TruthScan Protects Legal Video Evidence

TruthScan ensures that every frame you present in court is authentic, verified, and legally defensible.

The platform streamlines AI video verification through the following benefits:

Automated Audit Logs & Chain-of-Custody Documentation

- Court-Ready Reliability: Generates PDF and JSON reports that document every verification step with precise confidence scores and timestamps.

- Top-Tier Compliance: Rest easy knowing your data stays secure and meets strict global standards like SOC 2, ISO 27001, and GDPR.

- Flexible Data Control: Keep sensitive evidence within your required jurisdiction with on-premise or VPC deployment options designed for highly regulated industries.

Batch Processing & API Integration for Legal Workflows

- Massive Time Savings: Process thousands of files from discovery packages or surveillance sets simultaneously using bulk storage imports (S3/GCS).

- Seamless E-Discovery: Streamline your operations by integrating verification directly into your existing software via webhooks and APIs.

- Eliminate Manual Errors: Automate the first pass of evidence screening so your team can focus on strategy instead of tedious file-by-file checking.

Real-Time Verification for Depositions and Remote Hearings

- Stop Impersonation: Use live streaming endpoints to detect if a deponent is using deepfake filters or voice clones during a remote hearing.

- Maintain Court Integrity: Flag visual or audio anomalies in real-time, preventing fraudulent testimony from entering the record.

- Peace of Mind: Provide your clients and the court with an extra layer of security that guarantees the person on the screen is exactly who they claim to be.

Learn More About Securing AI Video Evidence

Protect your firm from the risks of manipulated media. TruthScan’s deepfake detector and AI video detector technology provide the ultimate solution for e-discovery and legal verification.

Ready to see TruthScan in action?

- Visit truthscan.com to get 20,000 free credits.

- For enterprise inquiries, API integration, or custom deployment options, reach out to the TruthScan team at truthscan.com/contact.